Blog

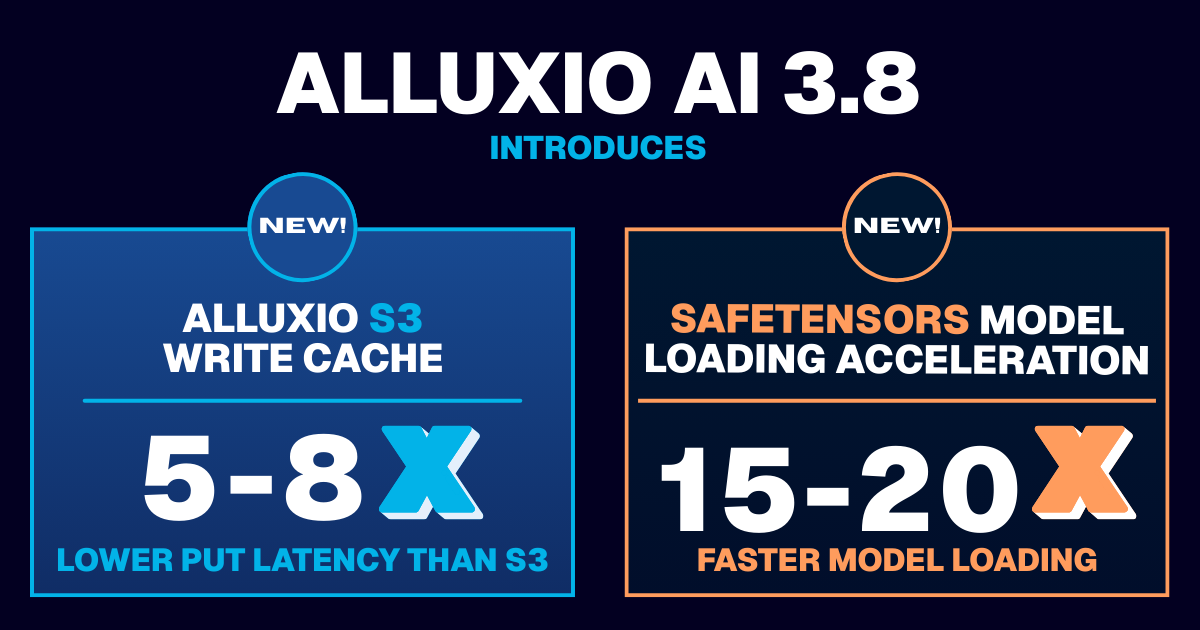

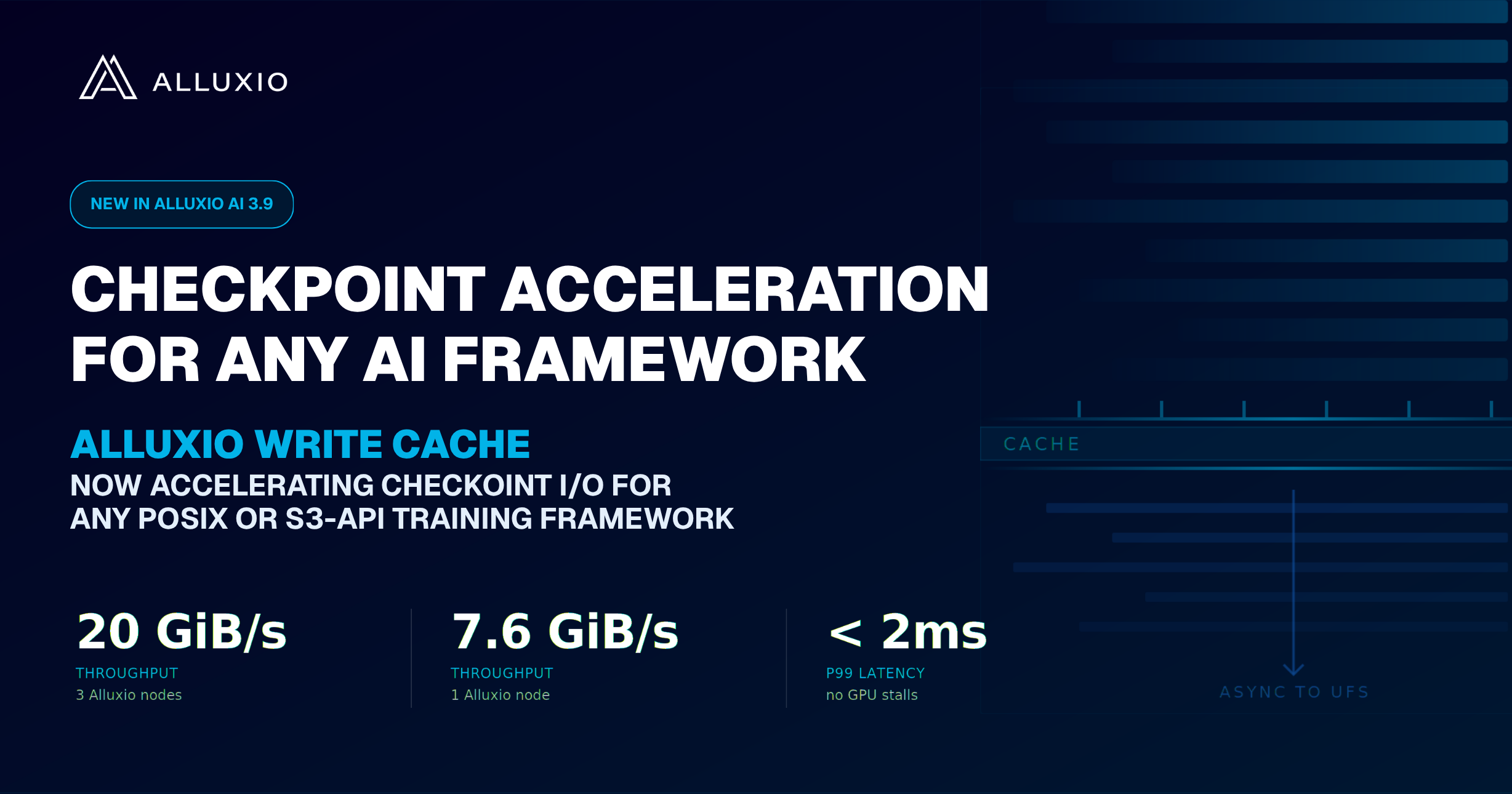

Learn about the new features in Alluxio AI 3.8, designed to eliminate two of the most painful bottlenecks in modern AI pipelines: Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration, which delivers near-local NVMe throughput for model weight loading.

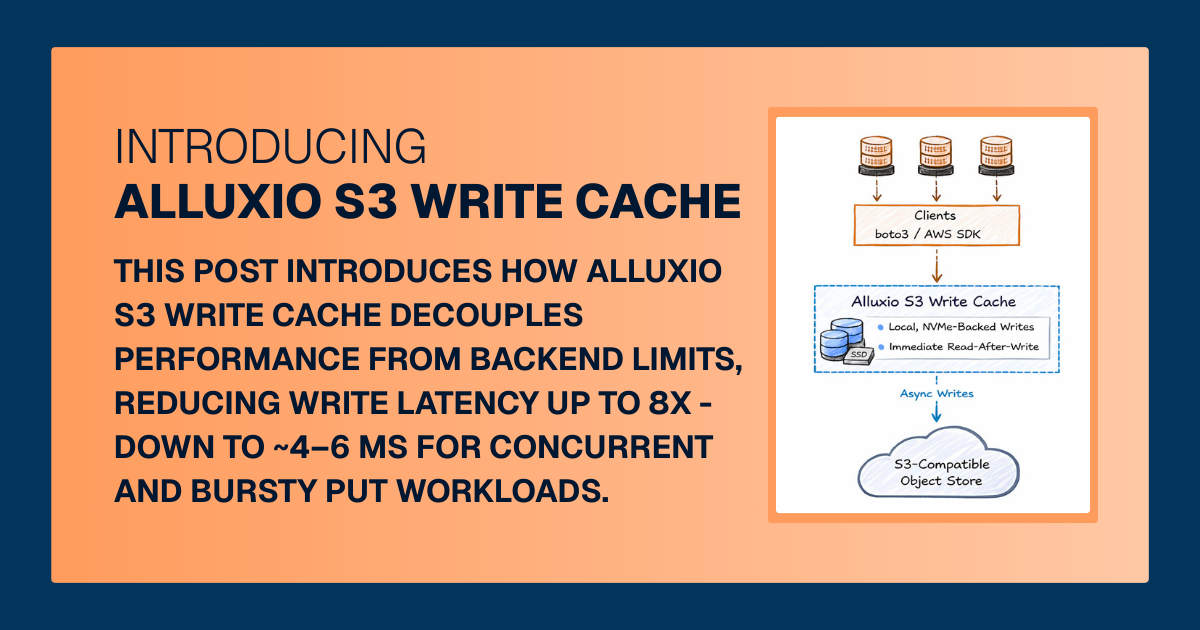

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post introduces how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency by up to 8x, down to ~4–6 ms for concurrent and bursty PUT workloads.

Xi Chen, Senior Software Engineer at Tencent & Top 100 Alluxio open source project contributor, explains the block allocation policy of Alluxio at the code level.

This blog was originally published on the website of NetApp: https://www.netapp.com/blog/modernize-analytics-workloads-netapp-alluxio/

Imagine as an IT leader having the flexibility to choose any services that are available in public cloud and on premises. And imagine being able to scale your storage for your data lakes with control over data locality and protection for your organization. With these goals in mind, NetApp and Alluxio are joining forces to help our customers adapt to new requirements for modernizing data architecture with low-touch operations for analytics, machine learning, and artificial intelligence workflows.

In the previous blog, we introduced Uber’s Presto use cases and how we collaborated to implement Alluxio local cache to overcome different challenges in accelerating Presto queries. The second part discusses the improvements to the local cache metadata.

This article shares how Uber and Alluxio collaborated to design and implement Presto local cache to reduce HDFS latency.

This article introduces the design and implementation of metadata storage in Alluxio Master, both on heap and off heap (based on RocksDB).

Raft is an algorithm for state machine replication that ensures high availability (HA) and fault tolerance. This blog shares how Alluxio has moved to a ZooKeeper-less, built-in Raft-based journal system as its HA implementation.

With machine learning (ML) and artificial intelligence (AI) applications becoming more business-critical, organizations are racing to advance their AI/ML capabilities. To realize the full potential of AI/ML, having the right underlying machine learning platform is a prerequisite.

This article will discuss a new solution to orchestrating data for end-to-end machine learning pipelines that addresses the above questions. I will outline common challenges and pitfalls, followed by proposing a new technique, data orchestration, to optimize the data pipeline for machine learning.
