Blog

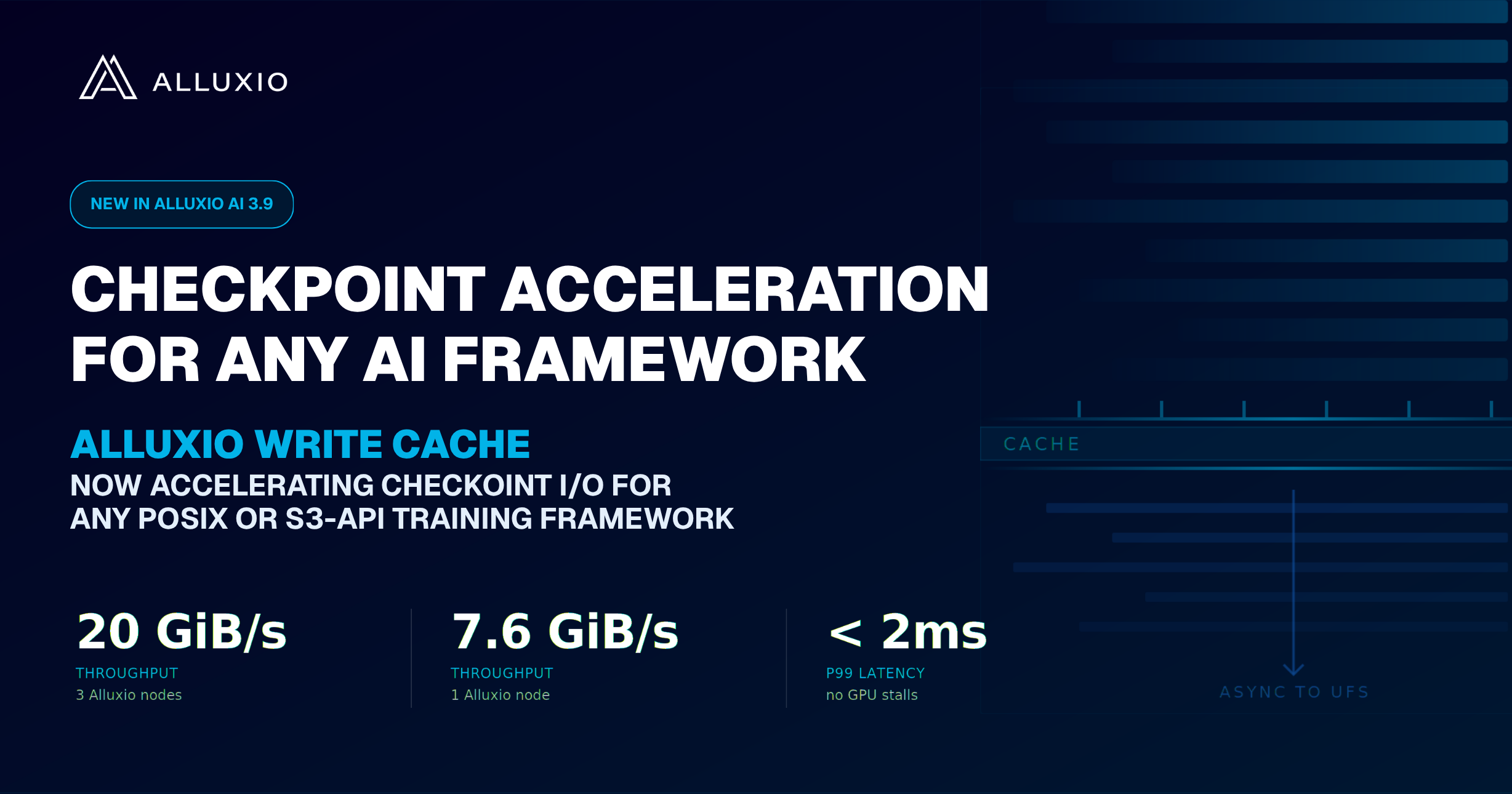

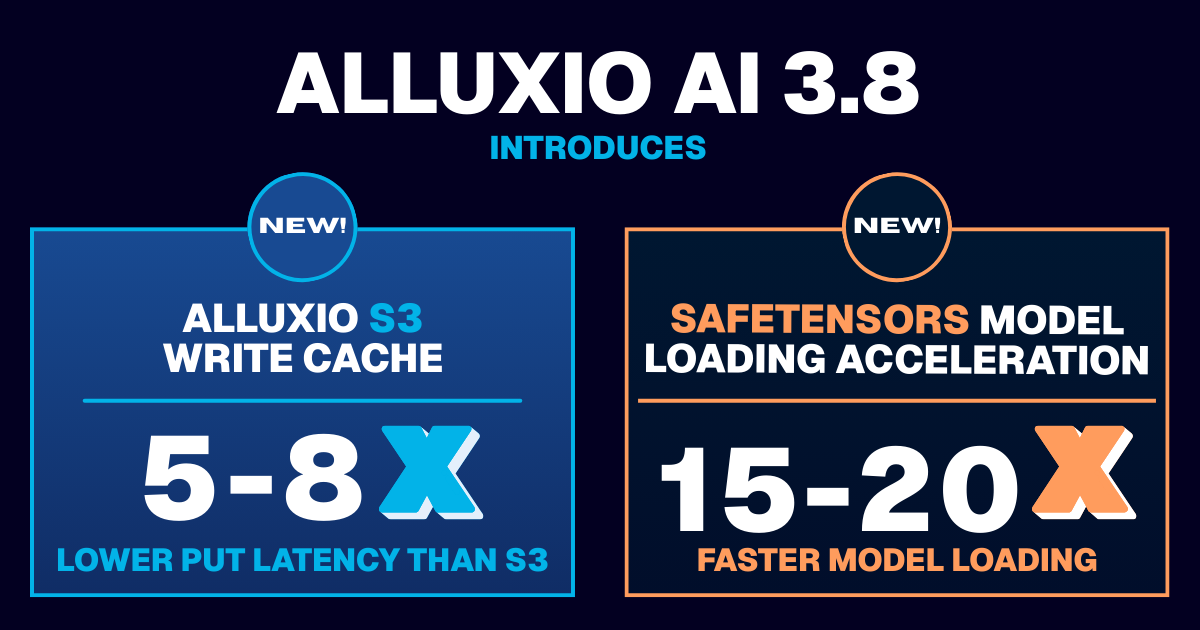

Learn about the new features in Alluxio AI 3.8, designed to eliminate two of the most painful bottlenecks in modern AI pipelines: Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration, which delivers near-local NVMe throughput for model weight loading.

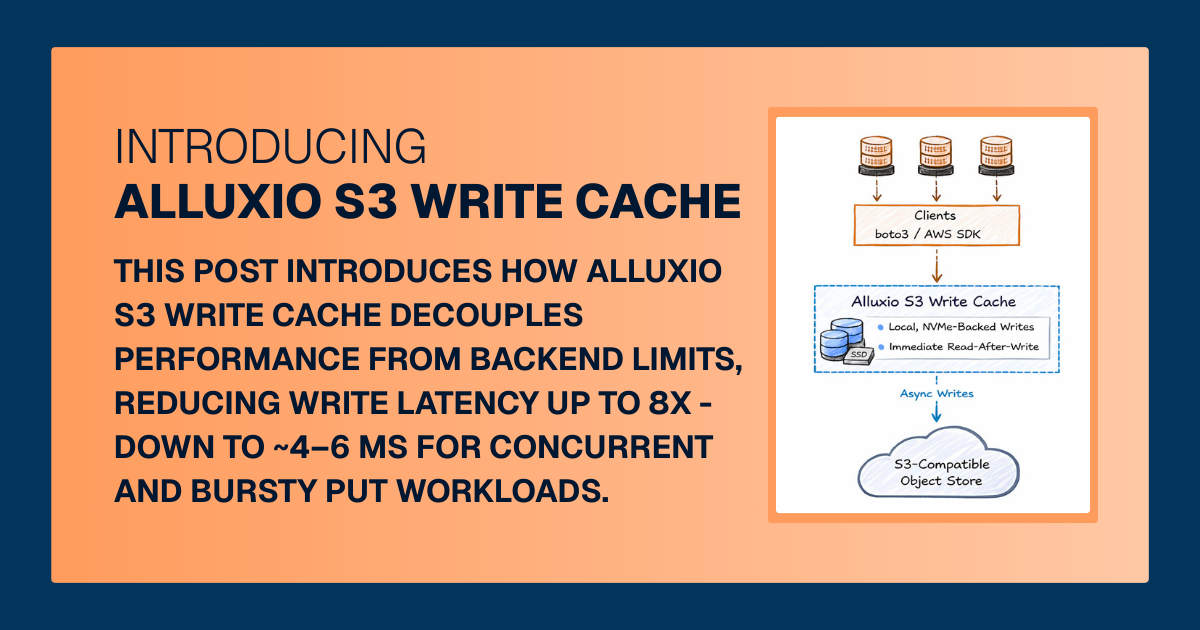

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post explains how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency by up to 8X, down to ~4–6 ms for concurrent and bursty PUT workloads.

As we step into 2024, we look back on and celebrate an incredible 2023 for the Alluxio community.

First and foremost, thank you to all of our contributors and the broader community! Together, we have achieved remarkable milestones. 💖

In this blog, we discuss the importance of data locality for efficient machine learning on the cloud. We examine the pros and cons of existing solutions and the tradeoff between reducing costs and maximizing performance through data locality. We then highlight the new-generation Alluxio design and implementation, detailing how it brings value to model training and deployment. Finally, we share lessons learned from benchmarks and real-world case studies.

This article was initially posted on datanami.

The paradigm shift ushered in by Artificial Intelligence (AI) in today’s business and technological landscapes is nothing short of revolutionary. AI’s potential to transform traditional business models, optimize operations, and catalyze innovation is vast. But navigating its complexities can be daunting. Organizations must understand and adhere to some foundational principles to ensure AI initiatives lead to sustainable success. Let’s delve deeper into these ten evergreen principles:

In this blog, we discuss the data access challenges in AI and why commonly used NAS/NFS may not be a good option for your organization.

Alluxio, the data platform company for all data-driven workloads, hosted the community event “AI Infra Day” on October 25, 2023. This virtual event brought together technology leaders working on AI infrastructure from Uber, Meta, and Intel, to delve into the intricate aspects of building scalable, performant, and cost-effective AI platforms.

This article was initially posted on Solutions Review.

Artificial Intelligence (AI) has consistently been in the limelight as the precursor of the next technological era. Its limitless applications, ranging from simple chatbots to intricate neural networks capable of deep learning, promise a future where machines understand and replicate complex human processes. Yet, at the heart of this technological marvel is something foundational yet often overlooked: data.

This article was initially posted on ITOpsTimes.

Unless you’ve been living off the grid, the hype around Generative AI has been impossible to ignore. A critical component fueling this AI revolution is the underlying computing power: GPUs. Lightning-fast GPUs enable speedy model training, but a hidden bottleneck can severely limit their potential: I/O. If data can’t reach the GPU fast enough to keep up with its computations, those precious GPU cycles are wasted waiting for something to do. This is why we need to bring more awareness to the challenges of I/O bottlenecks.