In a recent blog post, we discussed the ideation, design and new features in Alluxio 2.0 preview. Today we are thrilled to announce a revolutionary new project that the Alluxio engineering team has been hard at work on for the past year: the Alluxio Virtual Reality (VR) client.

One of the biggest obstacles for new Alluxio users is the steep learning curve of becoming an expert Alluxio administrator. The Alluxio VR client vastly reduces the time a user needs to become familiar with inspecting and manipulating the Alluxio namespace. Because the client interface mimics reality, new users can draw on their life experience to ramp up quickly.

Examples

Exploring the file system namespace is as simple as approaching the root of the Alluxio cluster, climbing the file system tree, and traversing the appropriate branches until you reach the desired leaf node.

For climbing, we support two modes: Free Solo and assisted climbing. For those looking for an adrenaline-fuelled adventure, we recommend Free Solo. If you fall off the tree in Free Solo mode, you will become an orphaned block, destined to wander the VR experience until housekeeping finds you. The assisted climbing mode allows faster traversal of the tree, but you first need to learn the assistance interface.

Deleting files from the file system is also easy: simply climb to the appropriate branch and remove the desired leaves from it. Users who delete files often, or many files at once, can use the recursive chainsaw utility to quickly trim large branches or many leaves. Users can also hire various herbivores to automate leaf removal; please refer to the Zookeeper manual for more details.

Mounting external storage such as S3 into Alluxio is supported by growing a new tree and connecting it to the existing root tree. Note that S3 trees, and object store trees in general, will not grow tall, because object stores are not real file systems and have a “flat” keyspace.

Alluxio 2.0 preview adds a new job service, a distributed computation framework for I/O workloads. We have also ported the job service API to the VR client, so users can hire and instruct a group of monkeys to work on the trees. The monkeys can then complete tasks such as moving leaves (files) across branches or replicating leaves.

Integrations & Ecosystem

The Alluxio VR client is implemented through a pluggable interface. Currently, only the native Alluxio VR client is integrated with Alluxio, but we expect popular VR devices to adopt the open source drivers for browsing Alluxio namespaces quickly.

We are also working on integrating the VR client with more big data applications, such as Apache Spark and Presto. Data scientists and engineers will then be able to define and run analytics jobs in their “trees” using the VR client.

Summary

The Alluxio VR client is a whole new way to interact with data more easily and efficiently. It works whether the data is stored on-premises, in the public cloud, or in a hybrid cloud environment. It also works whether data scientists and developers are at home, in the kitchen, at a bus stop, or at the gym.

.png)

Blog

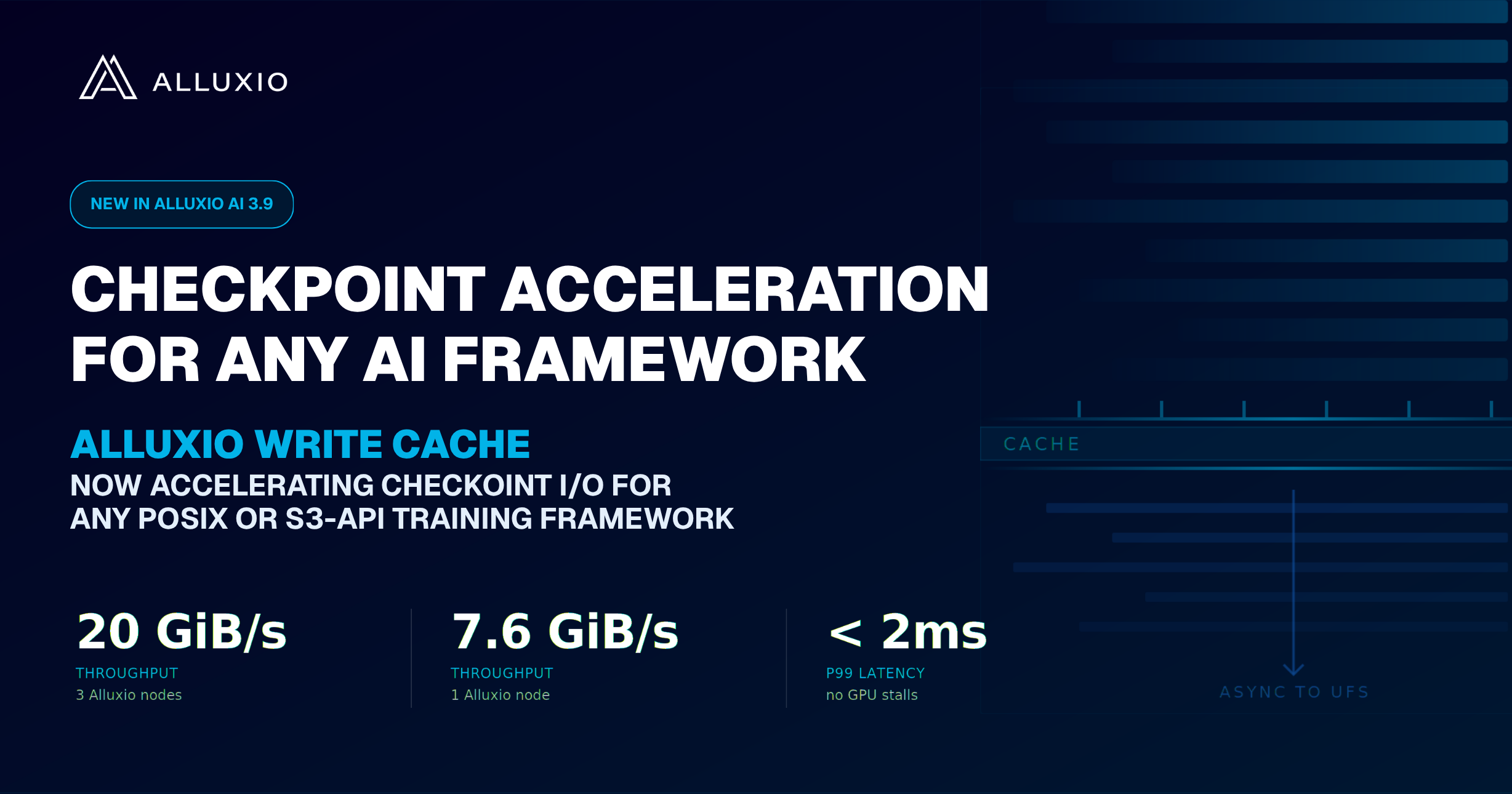

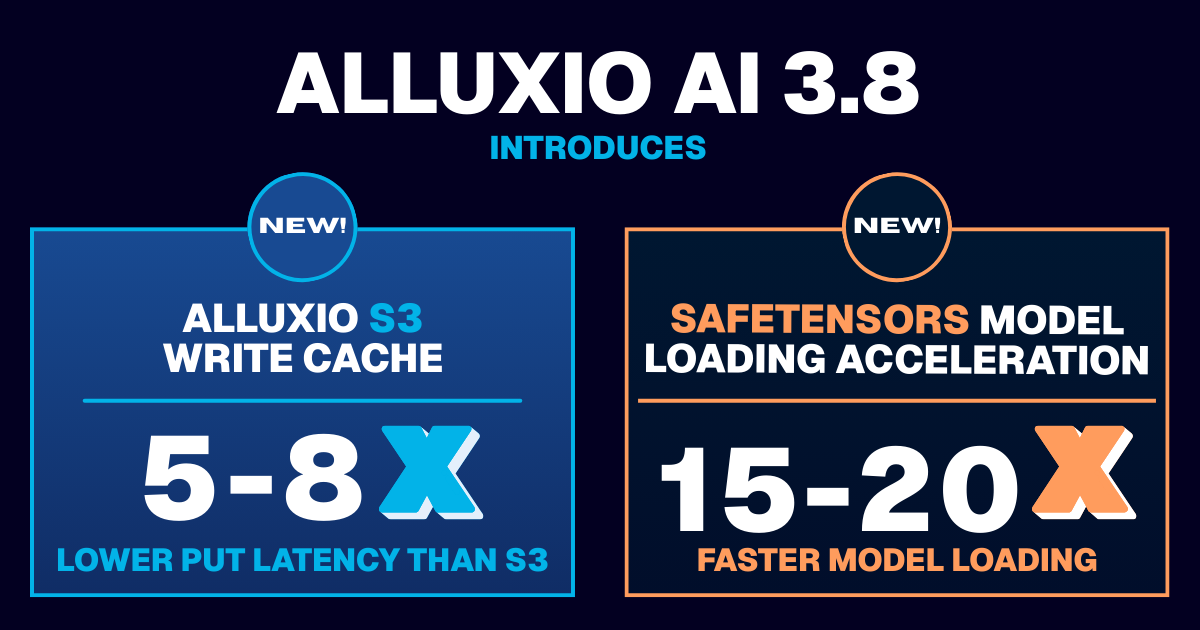

Learn about the new features in Alluxio AI 3.8 designed to eliminate two of the most painful bottlenecks in modern AI pipelines. Introducing Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration that delivers near-local NVMe throughput for model weight loading

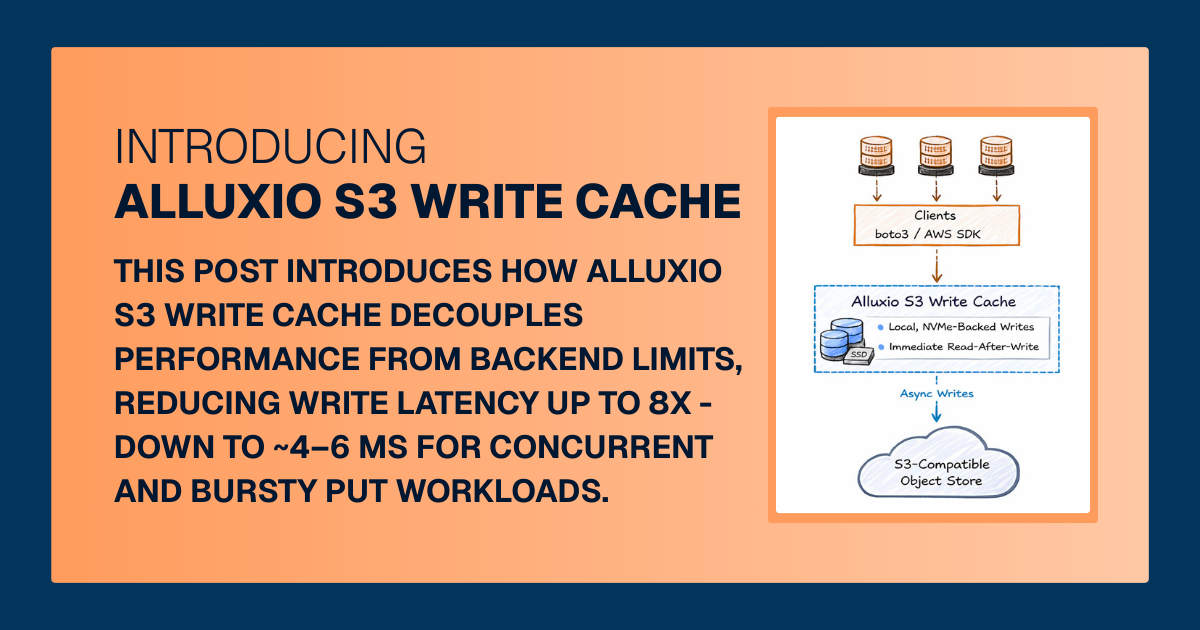

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post introduces how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency up to 8X - down to ~4–6 ms for concurrent and bursty PUT workloads.