Alluxio is a proud sponsor and exhibitor at the AWS Summit in New York. If you weren't able to attend, here are the highlights!

What's AWS Summit? It's a one-day conference that brings the cloud computing community together around topics ranging from machine learning and hybrid cloud to big data and analytics.

What we learned

- Enterprises are advancing along their cloud journey, and data access has become a common challenge: enterprises adopting hybrid cloud cannot efficiently access data that is split between on-premises systems and the public cloud.

- Amazon's CTO Werner Vogels talked about AWS Outposts in his keynote. Amazon's investment in Outposts is another indication of the rise of hybrid cloud.

- Cloud applications are heavily weighted toward data-driven workloads such as analytics and machine learning.

Announcements

We were thrilled to announce the release of Alluxio 2.0 at the Summit! Alluxio 2.0 is our largest release since the project's inception, with over 900 PRs and many new features that build on our data orchestration approach for the cloud.

Learn more: Orchestrating Data for the Cloud World with Alluxio 2.0, Download Alluxio

Thanks to everyone for stopping by the Alluxio booth and for the great conversations!

Blog

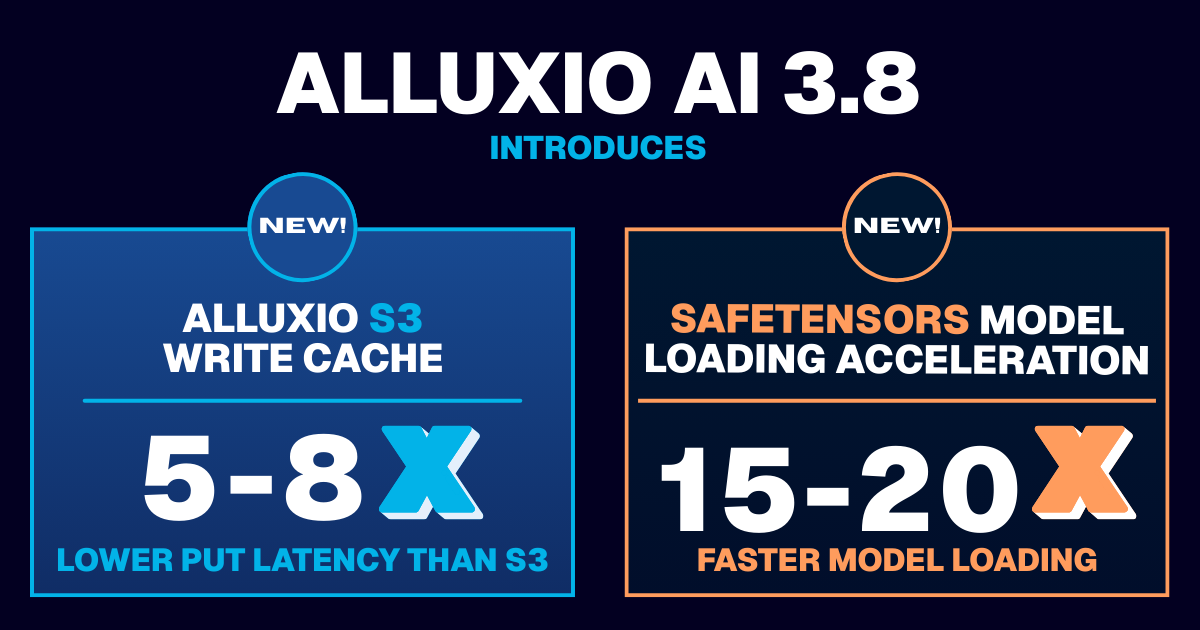

Learn about the new features in Alluxio AI 3.8 designed to eliminate two of the most painful bottlenecks in modern AI pipelines. Introducing Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration that delivers near-local NVMe throughput for model weight loading

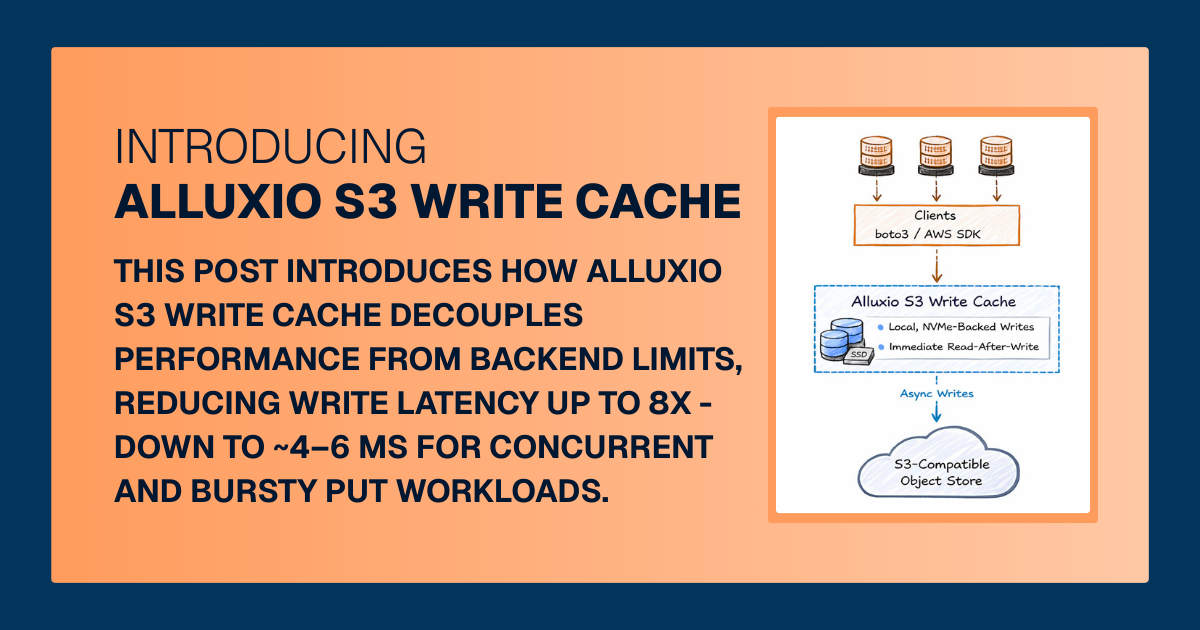

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post introduces how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency up to 8X - down to ~4–6 ms for concurrent and bursty PUT workloads.

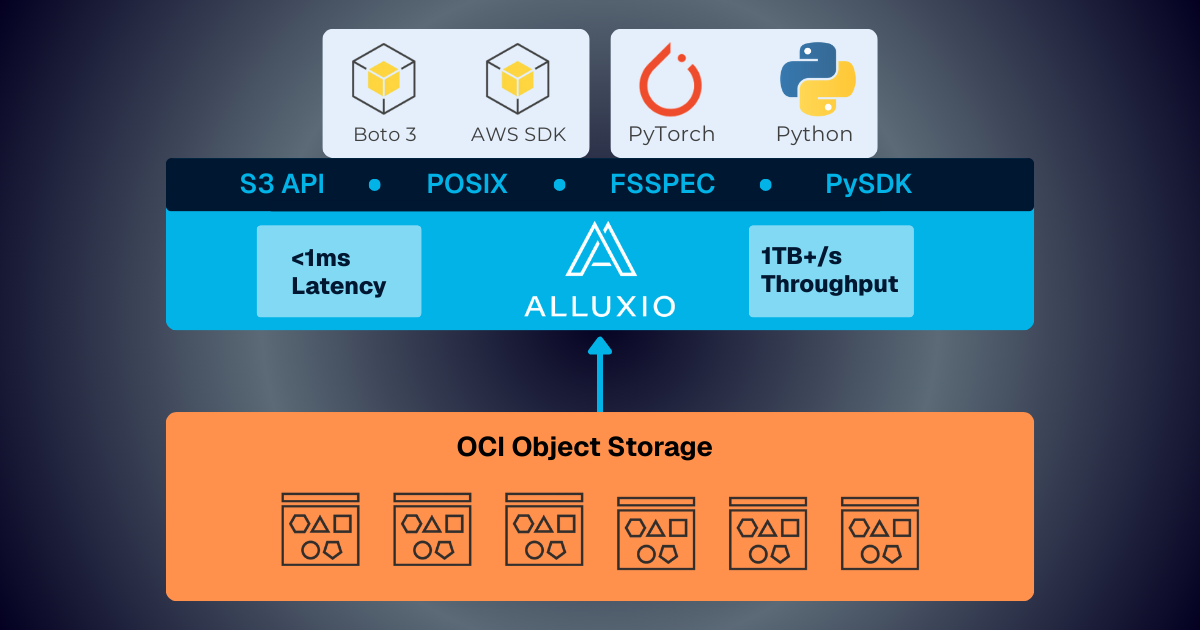

Oracle Cloud Infrastructure has published a technical solution blog demonstrating how Alluxio on Oracle Cloud Infrastructure (OCI) delivers exceptional performance for AI and machine learning workloads, achieving sub-millisecond average latency, near-linear scalability, and over 90% GPU utilization across 350 accelerators.