Alluxio, formerly Tachyon, began as a research project at UC Berkeley’s AMPLab in 2012. This year we announced the 1.0 release of Alluxio, the world’s first memory-speed virtual distributed storage system, which unifies data access and bridges computation frameworks and underlying storage systems. We have been working closely with the Alluxio community to realize the vision of Alluxio as the de facto storage unification layer for big data and other scale-out application environments.

Today, we’re excited to announce that the Alluxio open source project is adopting the Benevolent Dictator For Life (BDFL) model. The day-to-day management of the project will be carried out by the Project Management Committee (PMC). Within the PMC, there are Maintainers, who are responsible for upholding the quality of the code in their respective components. You can find the list of initial PMC members and maintainers here. As the co-creator of the Alluxio open source project, I have been shepherding the project since open-sourcing it in 2013, and I will assume the role of the BDFL.

The Alluxio community is growing faster than ever. We believe that adopting a project management mechanism will further accelerate the project's growth and make it easier for contributors around the world to collaborate on exciting new features and improvements to Alluxio. If you would like to join the Alluxio community, you can take your first step here.


Blog

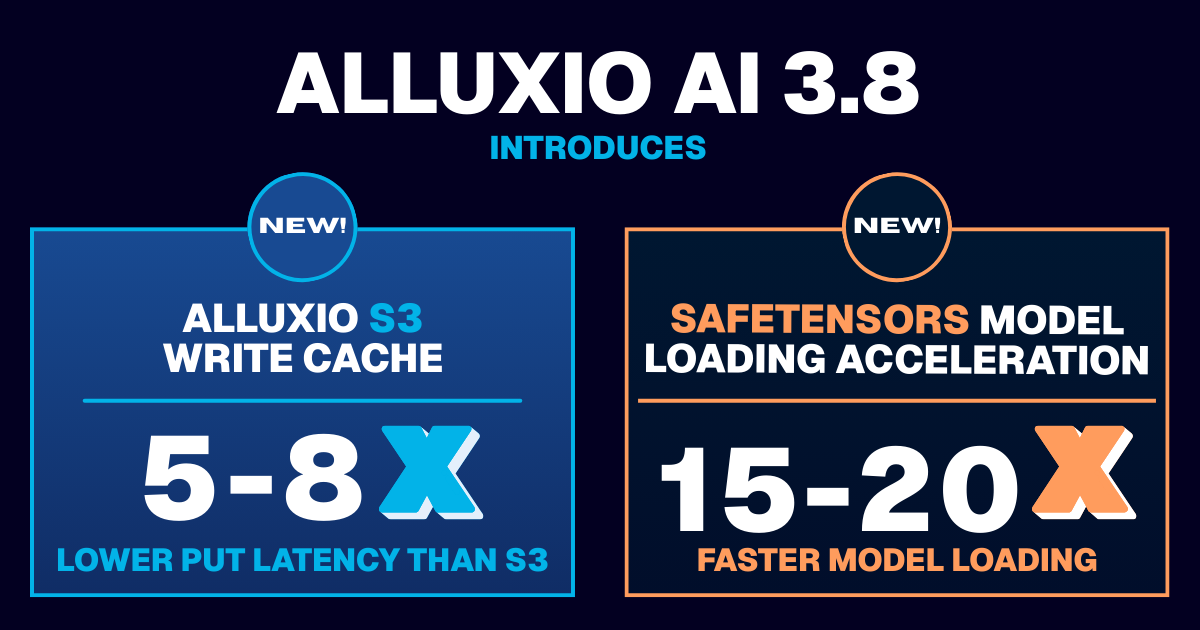

Learn about the new features in Alluxio AI 3.8 designed to eliminate two of the most painful bottlenecks in modern AI pipelines. Introducing Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration that delivers near-local NVMe throughput for model weight loading

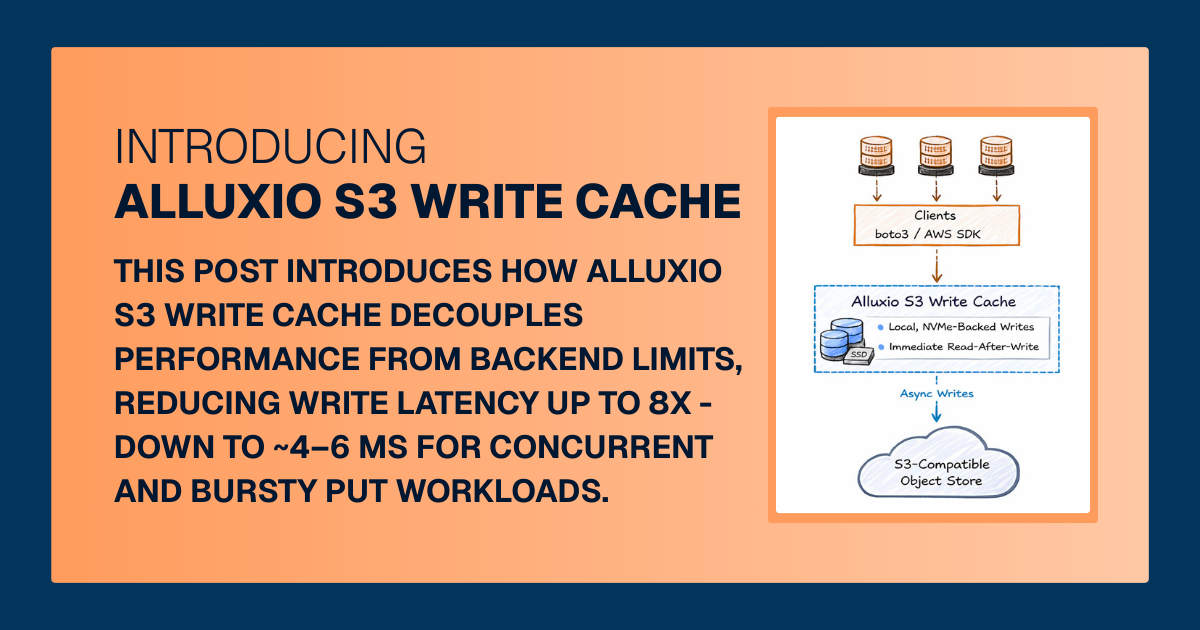

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post introduces how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency up to 8X - down to ~4–6 ms for concurrent and bursty PUT workloads.

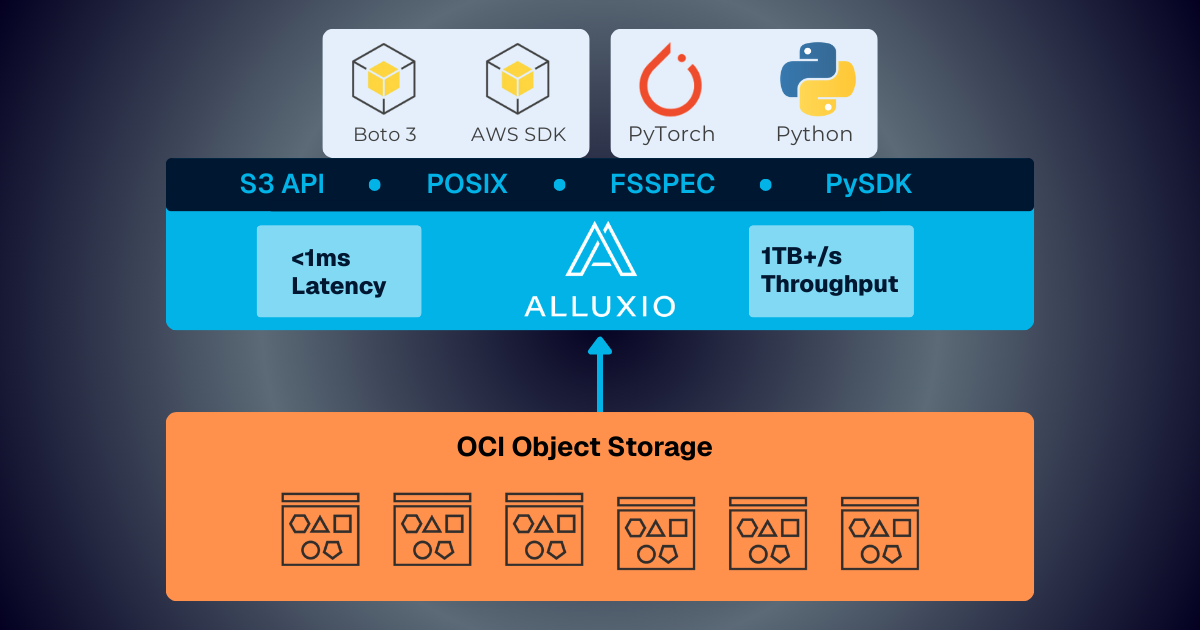

Oracle Cloud Infrastructure has published a technical solution blog demonstrating how Alluxio on Oracle Cloud Infrastructure (OCI) delivers exceptional performance for AI and machine learning workloads, achieving sub-millisecond average latency, near-linear scalability, and over 90% GPU utilization across 350 accelerators.