From time to time, a question comes up on the user mailing list about job failures with the error message "java.lang.ClassNotFoundException: Class alluxio.hadoop.FileSystem not found". This post explains why the failure happens and how to fix it.

Why does this happen?

This error indicates that the Alluxio client is not available at runtime. The exception is thrown when the job tries to access the Alluxio file system but cannot find the Alluxio client implementation it needs to connect to the service.

An Alluxio client is a Java library that defines the class alluxio.hadoop.FileSystem, which invokes Alluxio services on behalf of user requests (such as creating a file or listing a directory). It is typically pre-compiled into a jar file named alluxio-1.8.1-client.jar (for v1.8.1) and distributed with the Alluxio tarball. To work with applications, this file must be on the JVM classpath so that it can be discovered and loaded into the JVM process. If the application JVM cannot find this file on its classpath, it has no implementation of the class alluxio.hadoop.FileSystem and therefore throws the exception.
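To make this lookup a little more concrete, the bash sketch below scans a colon-separated classpath string (for example the value of `$HADOOP_CLASSPATH`, or the output of `hadoop classpath`) for an explicit Alluxio client jar entry. The function name `find_alluxio_client_jar` is hypothetical and not part of Alluxio or Hadoop. It is a simplification under stated assumptions: it only matches entries whose file name looks like `alluxio-<version>-client.jar`, so wildcard entries such as `dir/*` are not detected, and it does not check that the file exists on disk or actually contains `alluxio.hadoop.FileSystem`.

```shell
#!/usr/bin/env bash
# Hypothetical troubleshooting helper (not part of Alluxio or Hadoop).
# Prints the first classpath entry whose file name looks like an Alluxio
# client jar (e.g. alluxio-1.8.1-client.jar). Returns 0 if one is found,
# 1 otherwise. Wildcard entries such as "dir/*" are NOT expanded or matched.
find_alluxio_client_jar() {
  local classpath="$1"
  local entry
  local -a entries
  # Split the colon-separated classpath into an array of entries.
  IFS=':' read -r -a entries <<< "$classpath"
  for entry in "${entries[@]}"; do
    # Compare only the file-name part of each entry.
    case "${entry##*/}" in
      alluxio-*-client.jar)
        printf '%s\n' "$entry"
        return 0
        ;;
    esac
  done
  return 1
}
```

For example, `find_alluxio_client_jar "$HADOOP_CLASSPATH"` prints the matching path, or returns a non-zero status when no Alluxio client jar is listed, which is the situation that leads to the ClassNotFoundException.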

How to address this problem

The solution is to make sure the Alluxio client jar is on the classpath of the application. Several factors are worth considering when troubleshooting.

If the application is distributed across multiple nodes, the jar should be distributed to all of them. How to configure this differs considerably between compute frameworks:

- For MapReduce or YARN applications, append the path to the Alluxio client jar to `mapreduce.application.classpath` or `yarn.application.classpath` so that each task can find it. Alternatively, supply the path as an argument to `-libjars`, for example:

  ```shell
  $ bin/hadoop jar \
      libexec/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar wordcount \
      -libjars /<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar \
      <INPUT FILES> <OUTPUT DIRECTORY>
  ```

  Depending on the Hadoop distribution, it may also help to set `$HADOOP_CLASSPATH`:

  ```shell
  export HADOOP_CLASSPATH=/<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar:${HADOOP_CLASSPATH}
  ```

- For Spark applications, set the following in `spark/conf/spark-defaults.conf` on every node running Spark, then restart any long-running Spark server processes:

  ```
  spark.driver.extraClassPath /<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar
  spark.executor.extraClassPath /<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar
  ```

- For Hive, set the environment variable `HIVE_AUX_JARS_PATH` in `conf/hive-env.sh`:

  ```shell
  export HIVE_AUX_JARS_PATH=/<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar:${HIVE_AUX_JARS_PATH}
  ```
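The `HADOOP_CLASSPATH` and `HIVE_AUX_JARS_PATH` settings above prepend the jar to an existing colon-separated value. If such a line runs more than once, or the variable starts out empty, that pattern can leave duplicate entries or a trailing empty entry. The bash sketch below shows one way to guard against both; the function name `prepend_path_entry` is hypothetical and not part of Hadoop, Hive, or Alluxio, and `/<PATH_TO_ALLUXIO>` remains a placeholder for your installation directory.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Hadoop, Hive, or Alluxio).
# Prints CURRENT with JAR prepended, unless JAR is already one of its
# colon-separated entries. Avoids a trailing ':' when CURRENT is empty.
prepend_path_entry() {
  local jar="$1"
  local current="$2"
  if [ -z "$current" ]; then
    printf '%s\n' "$jar"
  elif [[ ":$current:" == *":$jar:"* ]]; then
    # Jar is already a whole entry: leave the value unchanged.
    printf '%s\n' "$current"
  else
    printf '%s\n' "$jar:$current"
  fi
}

# Example usage; the jar path below is a placeholder.
ALLUXIO_CLIENT_JAR="/<PATH_TO_ALLUXIO>/client/alluxio-1.8.1-client.jar"
HADOOP_CLASSPATH="$(prepend_path_entry "$ALLUXIO_CLIENT_JAR" "${HADOOP_CLASSPATH:-}")"
export HADOOP_CLASSPATH
HIVE_AUX_JARS_PATH="$(prepend_path_entry "$ALLUXIO_CLIENT_JAR" "${HIVE_AUX_JARS_PATH:-}")"
export HIVE_AUX_JARS_PATH
```

Wrapping both the current value and the jar in colons before comparing means only whole entries match, so an entry such as `/x/a.jar.bak` is not mistaken for `/a.jar`.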

In some cases, one compute framework relies on another. For example, Hive can use MapReduce as the execution engine for distributed queries. In this case, the classpath must be configured correctly for both Hive and MapReduce.

Summary

- For applications to work with Alluxio, the Alluxio client jar must be on their classpath.

- How to add the Alluxio client jar to the classpath depends on the compute framework.

.png)

Blog

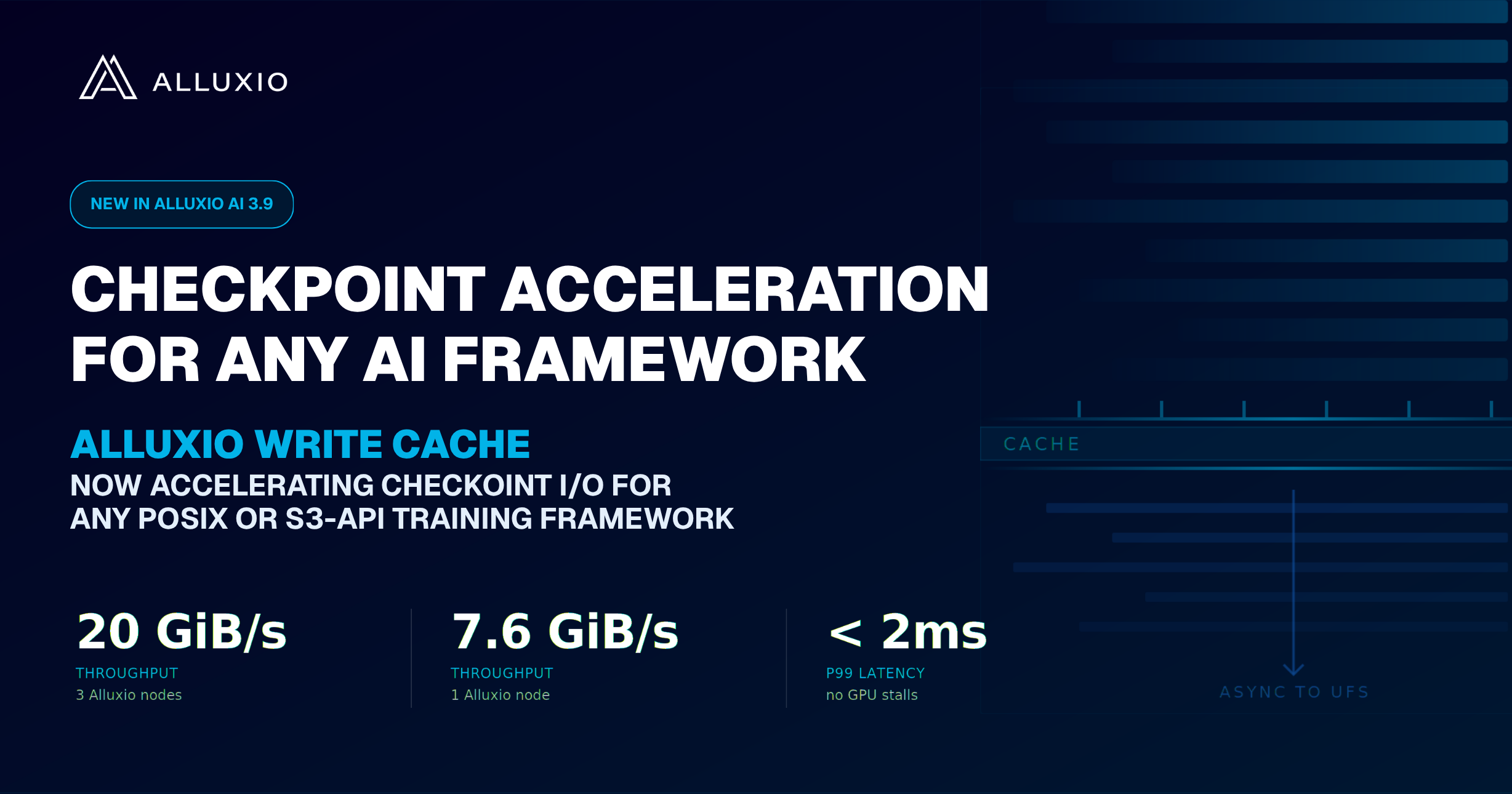

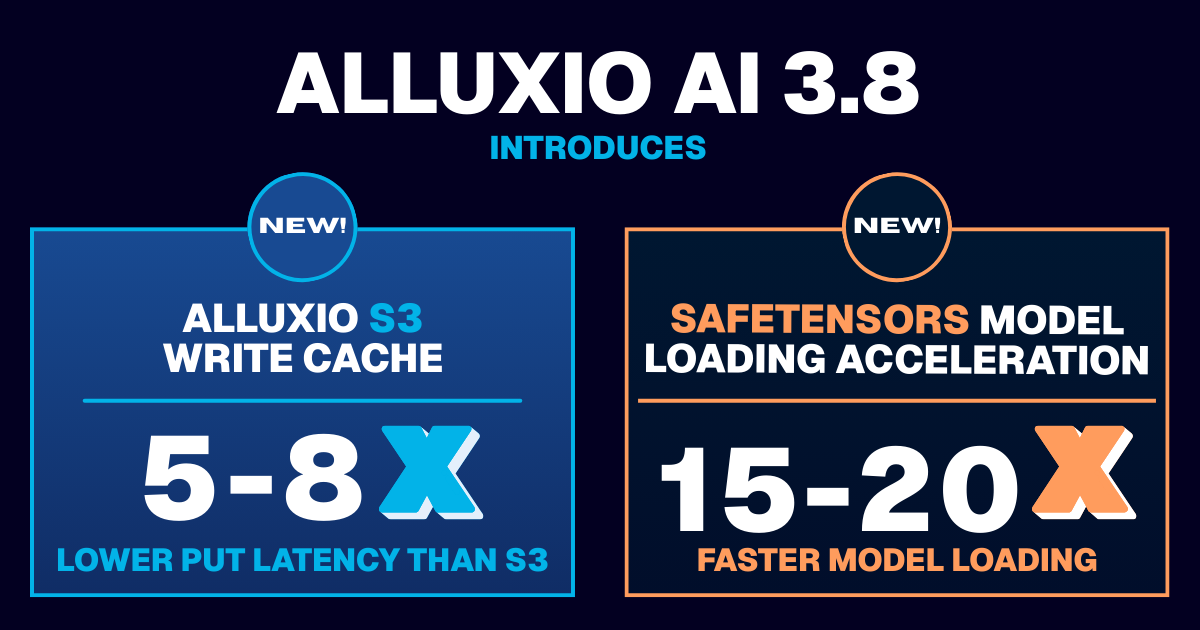

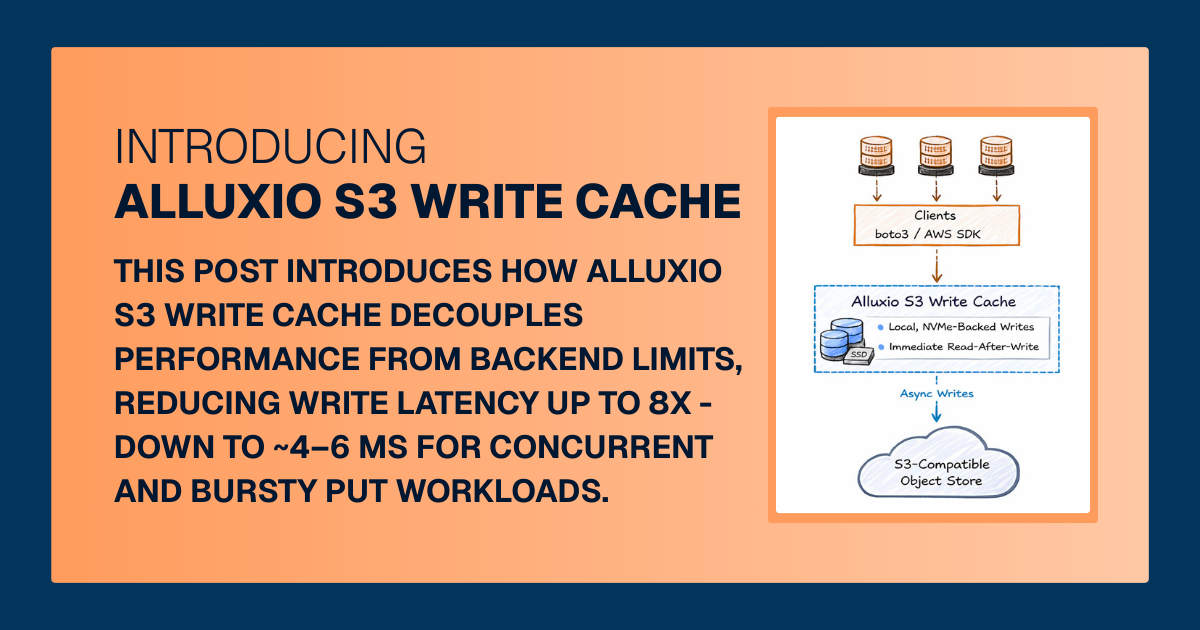

Learn about the new features in Alluxio AI 3.8 designed to eliminate two of the most painful bottlenecks in modern AI pipelines. Introducing Alluxio S3 Write Cache, which dramatically reduces object store write latency and improves write-heavy workload performance, and Safetensors Model Loading Acceleration that delivers near-local NVMe throughput for model weight loading

For write-heavy AI and analytics workloads, cloud object storage can become the primary bottleneck. This post introduces how Alluxio S3 Write Cache decouples performance from backend limits, reducing write latency up to 8X - down to ~4–6 ms for concurrent and bursty PUT workloads.