On September 13th, we held our first New York City Alluxio Meetup! Work-Bench generously hosted the meetup in Manhattan. This was the first US Alluxio meetup outside of the Bay Area, so it was extremely exciting to meet Alluxio enthusiasts on the East Coast! The meetup focused on Alluxio users running different applications, including Hive and Presto.

As an introduction, Haoyuan Li (creator and founder of Alluxio) and Bin Fan (founding engineer of Alluxio) gave an overview of Alluxio and the new features and enhancements in the v1.8.0 release.

Next, Tao Huang and Bing Bai from JD.com, one of the largest e-commerce companies in China, shared how they have been running Presto and Alluxio in production for almost a year. Their big data platform runs Alluxio on over 100 machines and can achieve speedups of over 10x. They also discussed their open source contributions to the Alluxio community and their plans for future work.

Thai Bui from Bazaarvoice, a digital marketing company in Texas, presented how they effectively cache S3 data with Alluxio for Hive queries. By using Alluxio to serve their S3 data, they saw 5x-10x speedups in their Hive queries.

The talk slides are online:

- Alluxio: An overview and what's new in 1.8 (Haoyuan Li, Bin Fan)

- Using Alluxio as a fault-tolerant pluggable optimization component of JD.com's compute frameworks (Tao Huang and Bing Bai)

- Hybrid collaborative tiered-storage with Alluxio (Thai Bui)

We had a great time learning more about Alluxio use cases and interacting with Alluxio users on the East Coast! We look forward to holding another NYC Alluxio meetup!

.png)

Blog

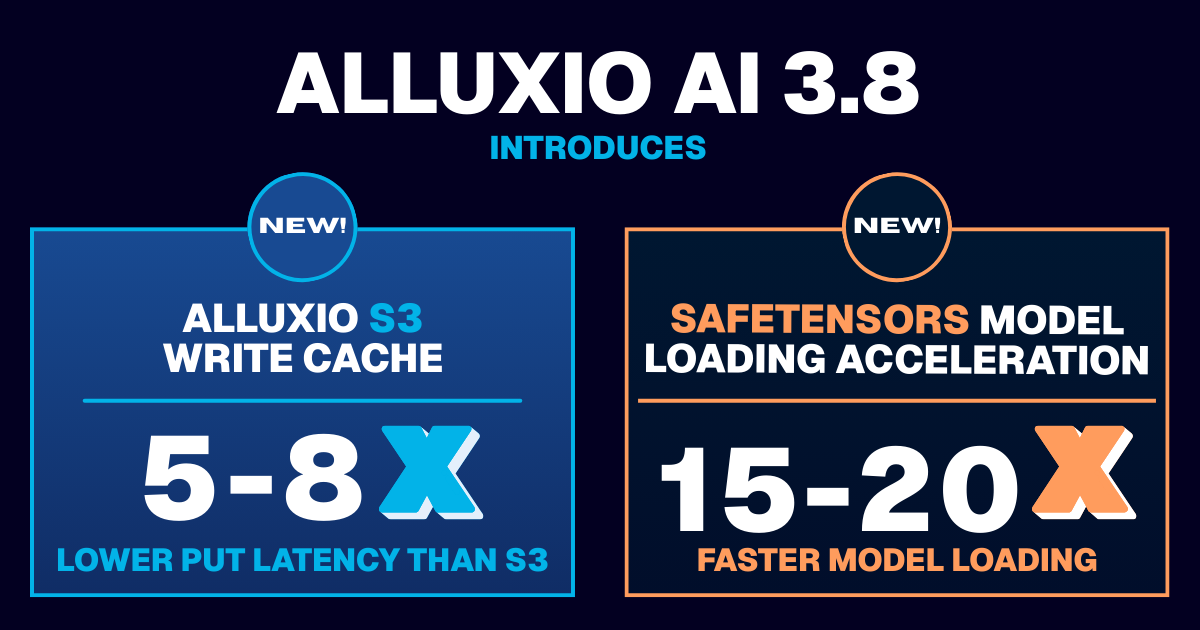
