RedNote Accelerates Model Training & Deployment with Alluxio

RedNote’s AI story: 41% training time reduction, 10x faster model download speed, and 80% model deployment cost savings. See how Alluxio made this happen.

Challenge

Deploying AI models often triggers cold starts—where models must be downloaded from remote object storage before they can serve inference. This results in frustrating delays, especially when scaling across many nodes or deploying large models.

Slow Model Distribution

Slow Inference Cold Starts

Redundant and Inefficient Data Path

Rising Data Transfer and Storage Costs

Your models are getting bigger.

Your pipelines don't have to get slower.

Solution: Alluxio as a Distributed Caching Layer

Alluxio AI acts as a high-performance caching layer that stores model binaries, weights, and dependencies closer to compute. Whether you're spinning up new inference nodes, deploying across regions, or managing multiple models, Alluxio AI ensures models are always ready to run.

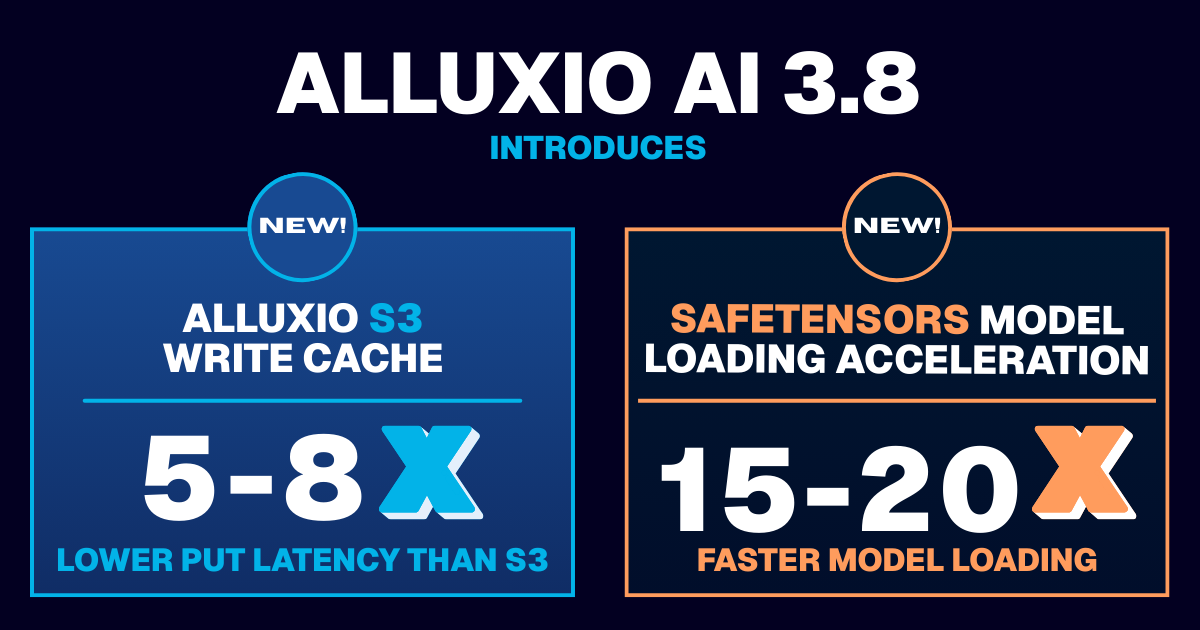

6-12x improvement in LLM cold start performance with freedom from cloud vendor lock-in

Gain up to 10x faster model download speeds and 80%+ faster model deployment times

Slash cloud costs and avoid redundant transfers with a software-only solution that augments your existing storage

Request a demo to learn about how Alluxio can help your AI use case.

Why Alluxio for AI

Unlike legacy distributed file systems or general-purpose storage solutions, Alluxio is:

Caching, Not Storage

AI Native

Cloud and Storage Agnostic

Transparent & Developer Friendly

Not another Lustre, Ceph, or Weka.

Alluxio AI brings caching to the core of your existing AI data pipelines.

.png)