Dyna Robotics Turbocharges Foundation Model Training

Dyna Robotics, a cutting-edge robotics company, improved its foundation model training performance by deploying Alluxio as a distributed caching and data access layer.

Challenge

Training large AI models requires feeding data to GPUs at scale. But object stores and data lakes aren’t built for the throughput and low latency that AI workloads demand. Every I/O bottleneck slows down training jobs, wastes GPU cycles, and delays iteration.

Training Delays

Severe I/O Starvation

Redundant and Inefficient Data Path

Rising Data Transfer and Storage Costs
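
To make the cost of I/O starvation concrete, here is a minimal back-of-the-envelope sketch. It assumes the simplest possible model, in which data loading and GPU compute do not overlap within a training step, so utilization is just compute time divided by total step time. The function name and the timings are hypothetical illustrations, not measurements from Dyna Robotics or Alluxio.

```python
def gpu_utilization(compute_s: float, io_wait_s: float) -> float:
    """Fraction of a training step the GPU spends computing.

    Assumes I/O wait and compute do not overlap (a simplification).
    """
    total = compute_s + io_wait_s
    if total <= 0:
        raise ValueError("step time must be positive")
    return compute_s / total


# Hypothetical per-step timings in seconds, chosen only for illustration.
starved = gpu_utilization(compute_s=0.25, io_wait_s=0.75)
fed = gpu_utilization(compute_s=0.25, io_wait_s=0.0)

print(f"starved: {starved:.0%}, fed: {fed:.0%}")  # starved: 25%, fed: 100%
```

Under this model, every second a step spends waiting on storage comes directly out of GPU utilization, which is why shortening the data path matters as much as adding more GPUs.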

Your models are getting bigger.

Your pipelines don't have to get slower.

Solution: Alluxio as a Distributed Caching Layer

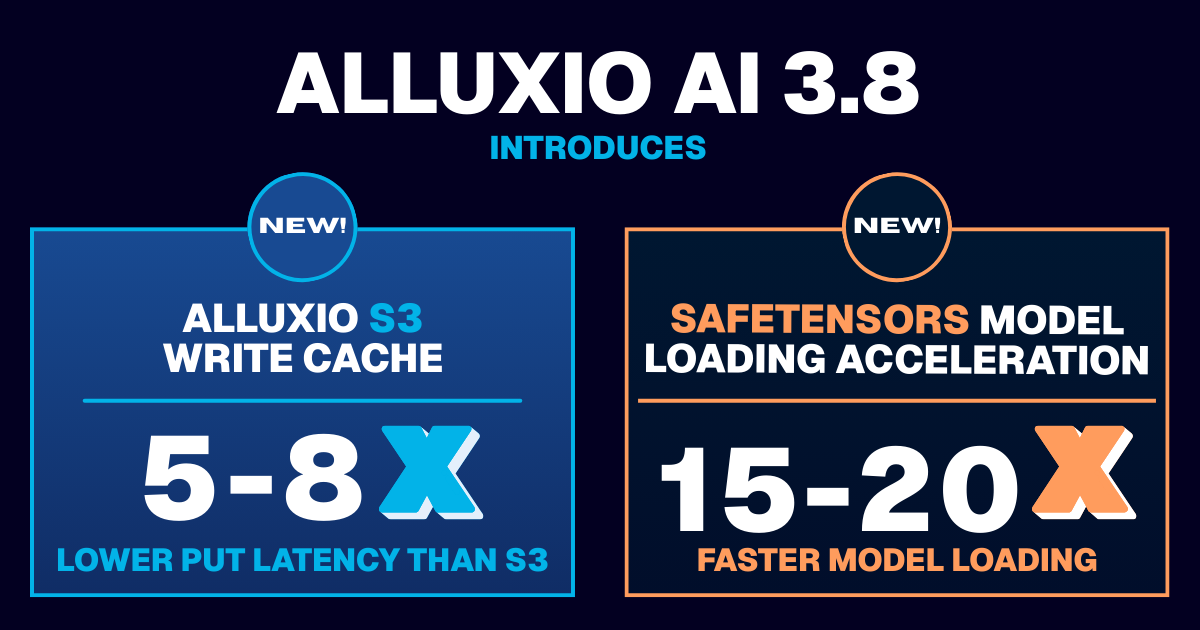

Alluxio Enterprise AI provides high-performance data access that intelligently manages data locality and caching across distributed environments.

Boost GPU utilization to 97%+ by eliminating data stalls. Keep your GPUs continuously fed with data.

Deliver up to 4x training performance with significantly reduced I/O wait times, allowing your GPUs to operate at peak efficiency.

Slash cloud costs and avoid redundant transfers with a software-only solution that utilizes your existing data lake storage.
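
The idea of avoiding redundant transfers can be illustrated with a generic read-through cache. This is a conceptual sketch only, not Alluxio's API or implementation: the `ReadThroughCache` class, the `fetch_from_object_store` function, and the shard names are all hypothetical stand-ins, and the "cache" is a plain in-memory dictionary with no eviction or distribution.

```python
from typing import Callable, Dict, List


class ReadThroughCache:
    """Serve repeated reads locally; go to the backing store only on a miss."""

    def __init__(self, fetch: Callable[[str], bytes]) -> None:
        self._fetch = fetch                 # slow path, e.g. an object store read
        self._store: Dict[str, bytes] = {}  # fast path, local copy of the data
        self.hits = 0
        self.misses = 0

    def read(self, key: str) -> bytes:
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        data = self._fetch(key)
        self._store[key] = data
        return data


remote_calls: List[str] = []


def fetch_from_object_store(key: str) -> bytes:
    # Hypothetical stand-in for a remote read; records each call it receives.
    remote_calls.append(key)
    return f"contents of {key}".encode()


cache = ReadThroughCache(fetch_from_object_store)

# Three epochs over the same two shards: only the first epoch goes remote.
for epoch in range(3):
    for shard in ("shard-0", "shard-1"):
        cache.read(shard)

print(len(remote_calls), cache.hits, cache.misses)  # 2 4 2
```

In this toy run, six reads result in only two remote fetches. A multi-epoch training job that rereads the same dataset benefits from the same pattern, since the underlying storage is touched once per item rather than once per epoch.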

Request a demo to learn how Alluxio can help your AI use case.

Why Alluxio for AI

Unlike legacy distributed file systems or general-purpose storage solutions, Alluxio is:

Caching, Not Storage

AI Native

Cloud and Storage Agnostic

Transparent & Developer Friendly

Not another Lustre, Ceph, or Weka.

Alluxio AI brings caching to the core of your existing AI data pipelines.
