

Events

Unlock the full performance of your AI/ML infrastructure on Oracle Cloud Infrastructure (OCI).

Join Oracle's Master Principal Cloud Architect Xinghong He and Alluxio's VP of Technology Bin Fan for an in-depth technical session exploring how modern tiered caching, optimized storage integration, and smart deployment choices can deliver sub-millisecond latency and up to 5× faster data access on OCI — at scale.

You'll learn about:

- Architectural insights: How Alluxio’s tiered caching architecture works with OCI Object Storage and BM.DenseIO compute instances to eliminate data access bottlenecks.

- Benchmark-proven results: See real MLPerf Storage 2.0 and Warp benchmark outcomes demonstrating sub-millisecond latency and dramatic throughput gains.

- Deployment strategies: Compare deployment options — dedicated mode for peak performance vs. co-located mode for cost-efficient scale.

- Practical, actionable guidance: Implementation best practices you can apply directly to your AI/ML workloads on OCI.

Fireworks AI is a leading inference cloud provider for Generative AI, powering real-time inference and fine-tuning services for customers' applications that require minimal latency, high throughput, and high concurrency. Their GPU infrastructure spans 10+ clouds and 15+ regions, serving enterprises and developers deploying production AI workloads at scale.

With model sizes reaching 70GB+, Fireworks AI faced critical challenges: eliminating cold start delays, managing highly concurrent model downloads across GPU clusters, reducing annual cloud egress costs in the tens of thousands, and automating manual pipeline management that consumed 4+ hours weekly. They chose Alluxio to scale with their hyper-growth without requiring dedicated infrastructure resources.

In this tech talk, Fireworks AI Software Engineer Akram Bawayah and Bin Fan, VP of Technology at Alluxio, share how Fireworks AI uses Alluxio to power their multi-cloud inference infrastructure.

They discuss:

- How Fireworks AI uses Alluxio in its high-performance model distribution system to deliver fast, reliable inference across multiple clouds

- How implementing Alluxio distributed caching achieved 1TB/s+ model deployment throughput, reducing model loading from hours to minutes while significantly cutting cloud egress costs

- How to simplify infrastructure operations and seamlessly scale model distribution across multi-cloud GPU environments

Amazon S3 and other cloud object stores have become the de facto storage system for organizations large and small, and it’s no wonder. Cloud object stores deliver flexibility, with effectively unlimited capacity that scales on demand and data durability out of the box, at low cost.

Yet as workloads shift toward real-time AI, inference, feature stores, and agentic memory systems, S3’s latency and restricted semantics become bottlenecks. In this webinar, you’ll learn how to augment, rather than replace, S3 with a tiered architecture that delivers sub-millisecond performance, richer semantics, and high throughput, all while preserving S3’s advantages of low-cost capacity, durability, and operational simplicity.

We’ll walk through:

- The key challenges posed by latency-sensitive, semantically rich workloads (e.g. feature stores, RAG pipelines, write-ahead logs)

- Why “just upgrading storage” isn’t sufficient — the bottlenecks in metadata, object access latency, and write semantics

- How Alluxio transparently layers on top of S3 to provide ultra-low-latency caching, append semantics, and zero data migration, with both FSx-style POSIX access and S3 API access

- Real-world results: achieving sub-ms TTFB, >90% GPU utilization in ML training, 80× faster feature store query response times, and dramatic cost savings from reduced S3 operations

- Trade-offs, deployment patterns, and best practices for integrating this tiered approach in your AI/analytics stack

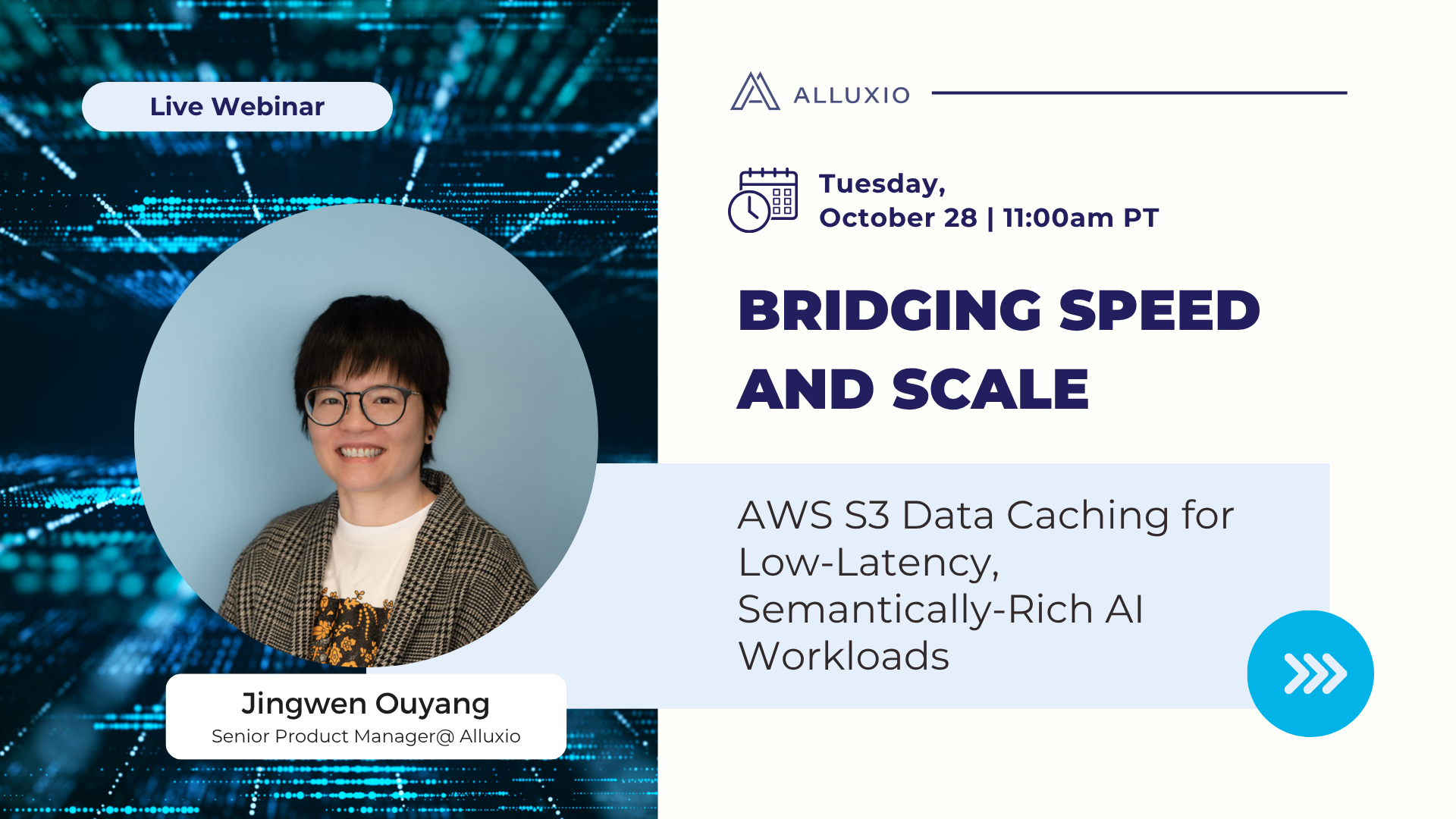

Speaker:

Jingwen Ouyang is a Senior Product Manager at Alluxio with over 10 years of diverse data experience. Previously, she worked as a Data Engineer at Meta and SanDisk. Jingwen received her BS and MS in EECS from MIT. She’s also a proud mom of a 2-year-old border collie, a certified snowboard instructor, and a passionate basketball fan.