DATA ORCHESTRATION SUMMIT

DECEMBER 8-9, 2020 | VIRTUAL

The virtual event for everyone building cloud-native data and AI platforms.

This open source community conference focuses on the key data engineering challenges and solutions around building cloud-native data and AI platforms using the latest technologies, such as Alluxio, Apache Spark, Apache Airflow, Presto, TensorFlow, and Kubernetes. The Summit brings together data engineers, architects, cloud engineers, data scientists, and industry thought leaders who are solving data problems at the intersection of cloud, AI/ML, and data.

PRESENTATIONS

DAY ONE | December 8, 2020

KEYNOTES

The Pandemic Changes Everything, The need for speed and resiliency

Parviz Peiravi, Intel

CLOUD NATIVE JOURNEYS – MODERNIZING DATA PLATFORMS

Building a high-performance platform on AWS to support real-time gaming services using Presto, Alluxio, and S3

Serena Wang, Electronic Arts

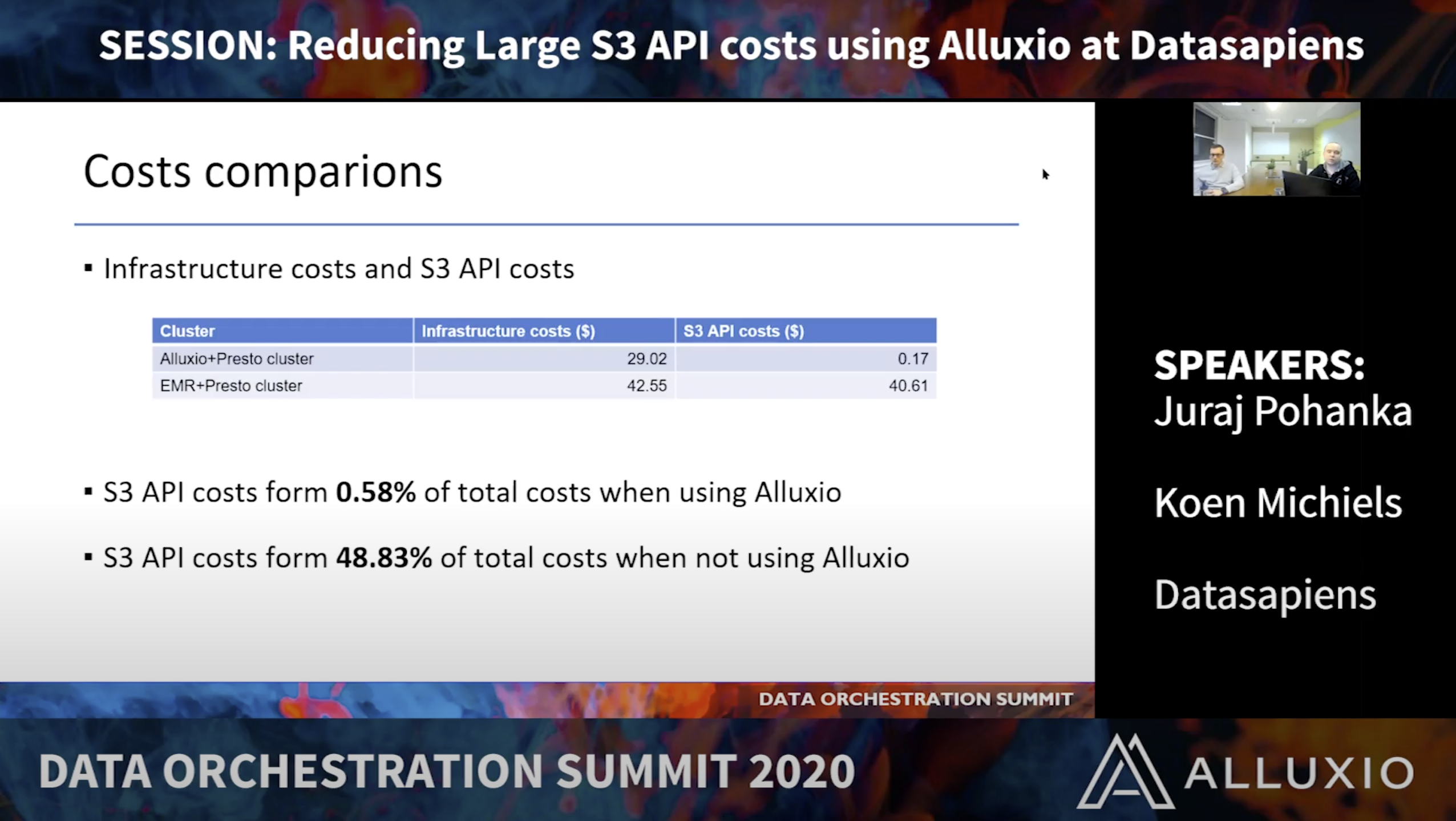

Reducing large S3 API costs using Alluxio at Datasapiens

Juraj Pohanka & Koen Michiels, Datasapiens

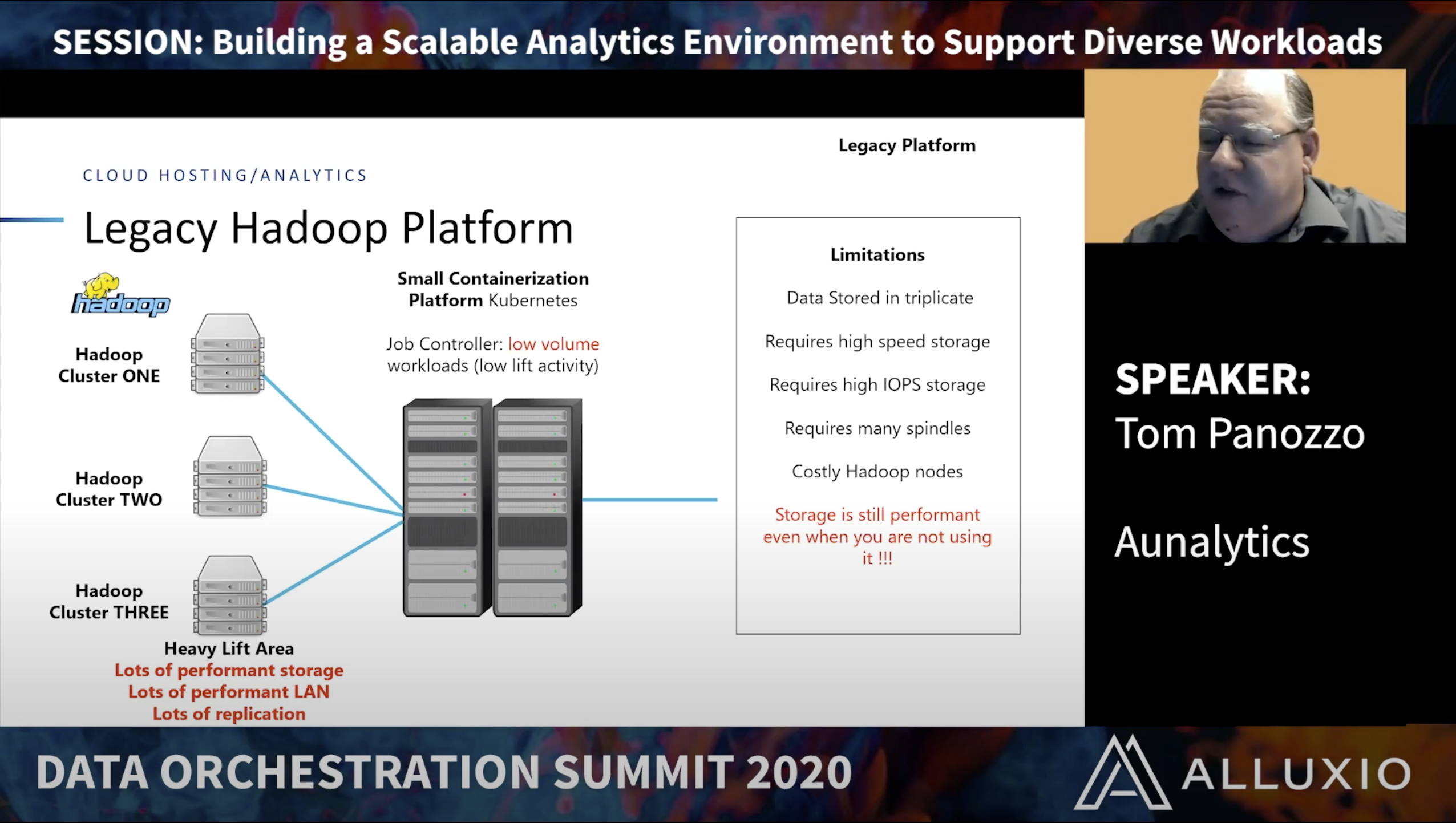

Building a Scalable Analytics Environment to Support Diverse Workloads

Tom Panozzo, Aunalytics

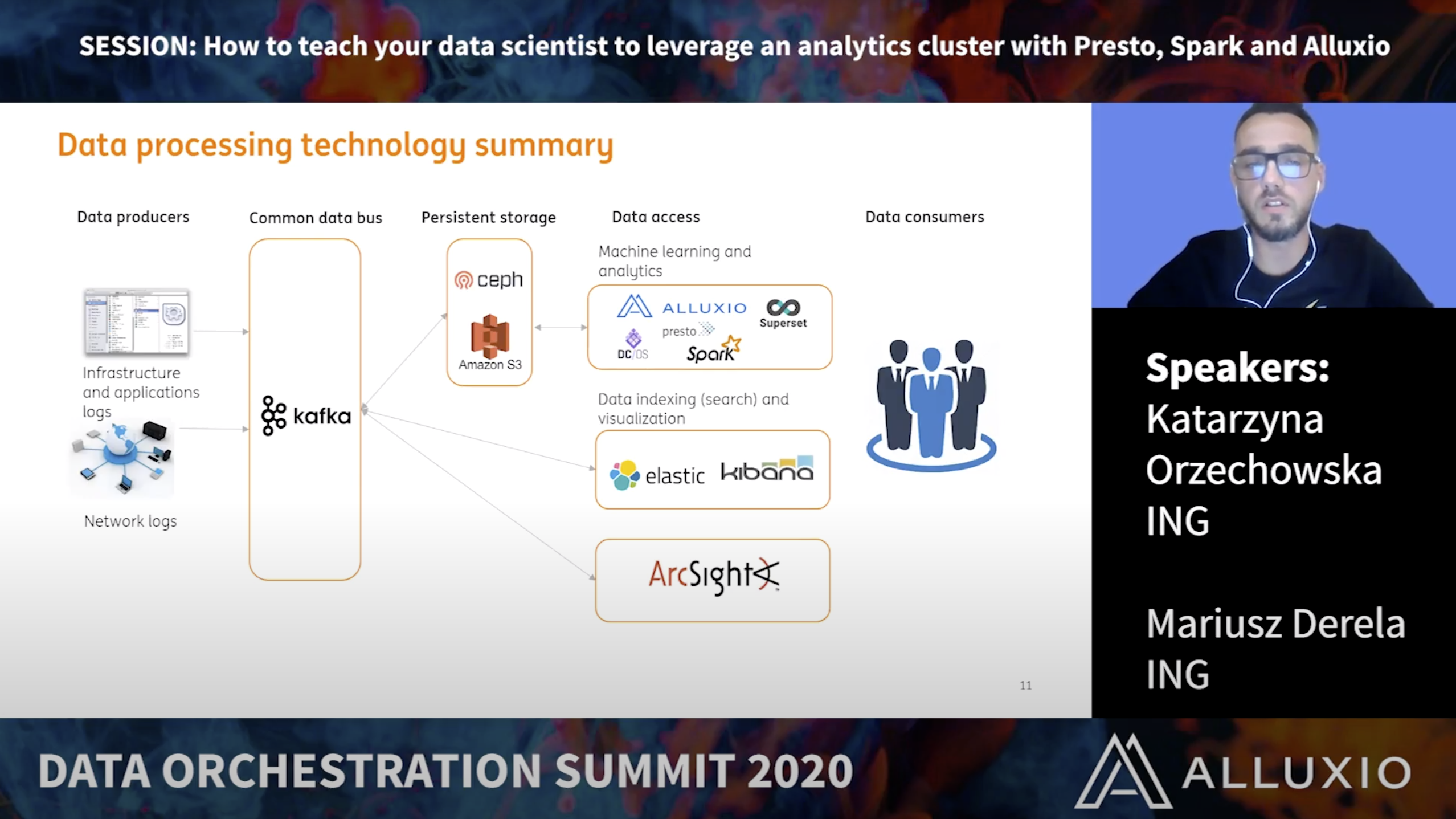

How to Teach Your Data Scientist to Leverage an Analytics Cluster with Presto, Spark, and Alluxio

Katarzyna Orzechowska & Mariusz Derela, ING Tech

HIGH PERFORMANCE SQL ANALYTICS

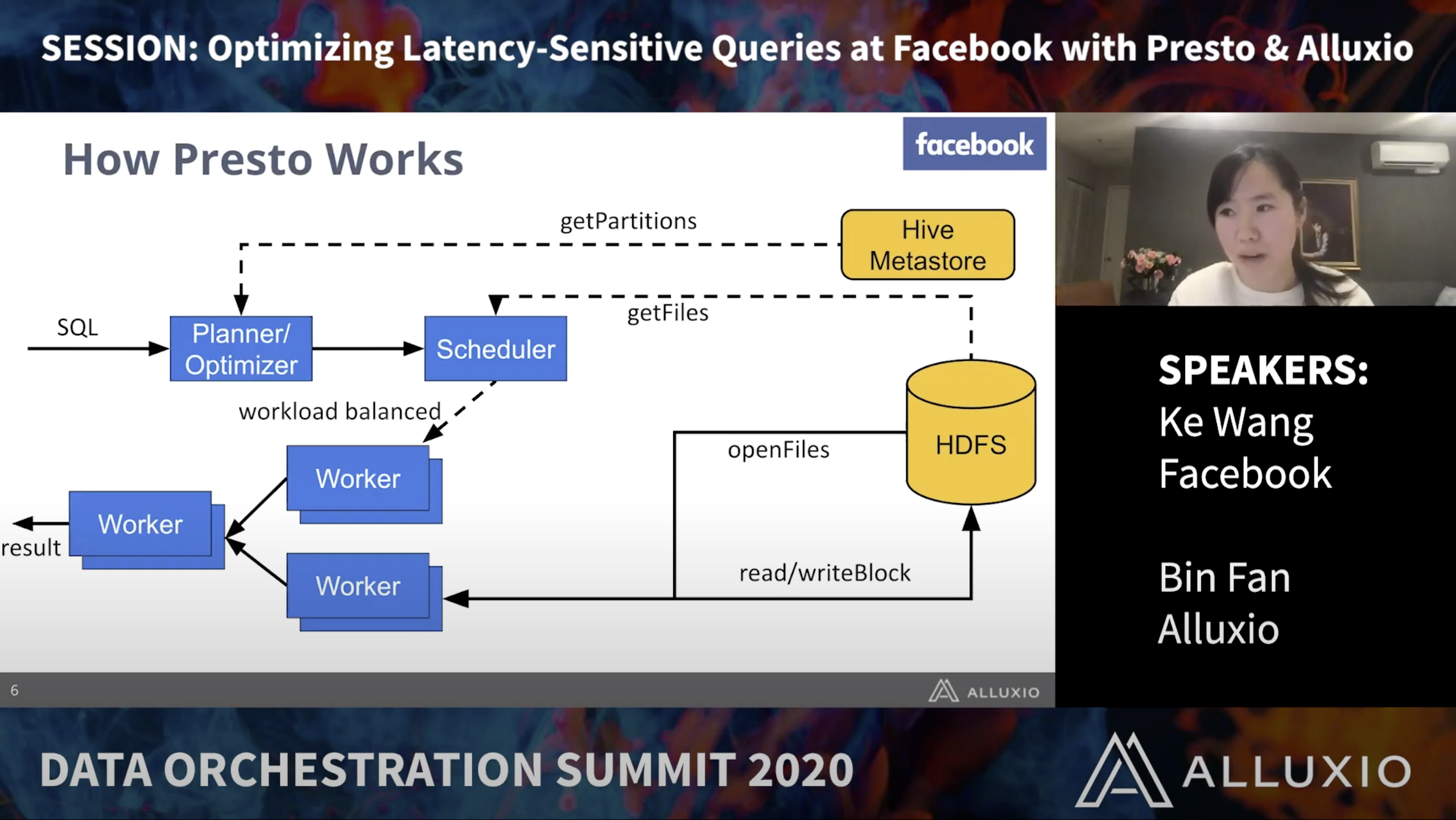

Optimizing Latency-sensitive queries for Presto at Facebook: A Collaboration between Presto & Alluxio

Ke Wang, Facebook & Bin Fan, Alluxio

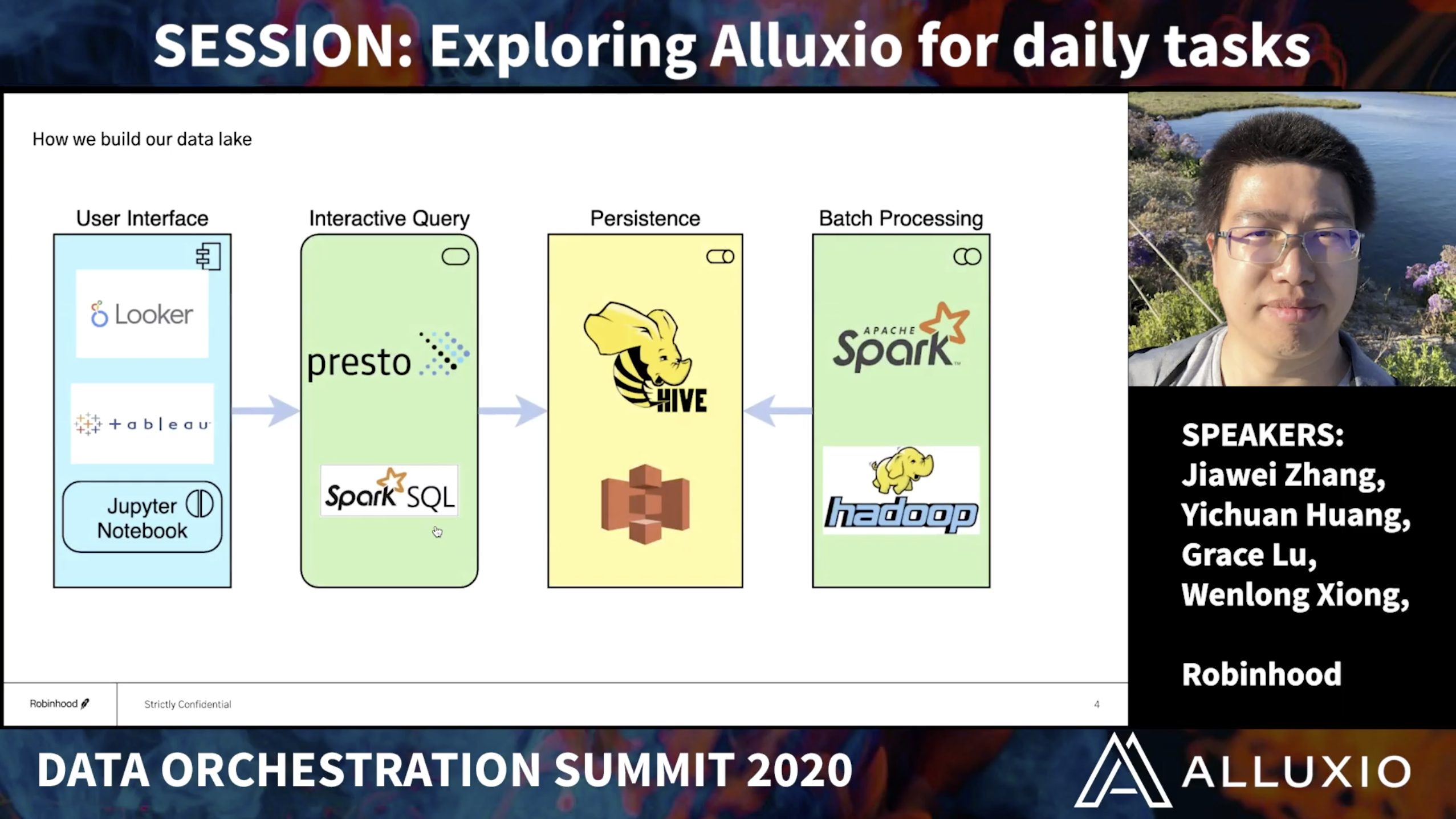

Exploring Alluxio for Daily Tasks at Robinhood

Jiawei Zhang, Yichuan Huang, Grace Lu, & Wenlong Xiong, Robinhood

Presto: Fast SQL-on-anything across data lakes, DBMS, and NoSQL Data stores

Kamil Bajda-Pawlikowski, Starburst Data

High Performance Data Lake with Apache Hudi and Alluxio at T3Go

Trevor Zhang, T3Go

Speeding Up Spark Performance using Alluxio at China Unicom

Ce Zhang, China Unicom

DAY TWO | December 9, 2020

KEYNOTES

Modernizing Global Shared Data Analytics Platform and our Alluxio Journey

Sandipan Chakraborty, Rakuten

HYBRID CLOUD ANALYTICS & AI

Securely Enhancing Data Access in Hybrid Cloud with Alluxio

Mike Fagan & Prashant Khanolkar, Comcast

Bursting on-premise analytic workloads to Amazon EMR using Alluxio

Roy Hasson, AWS

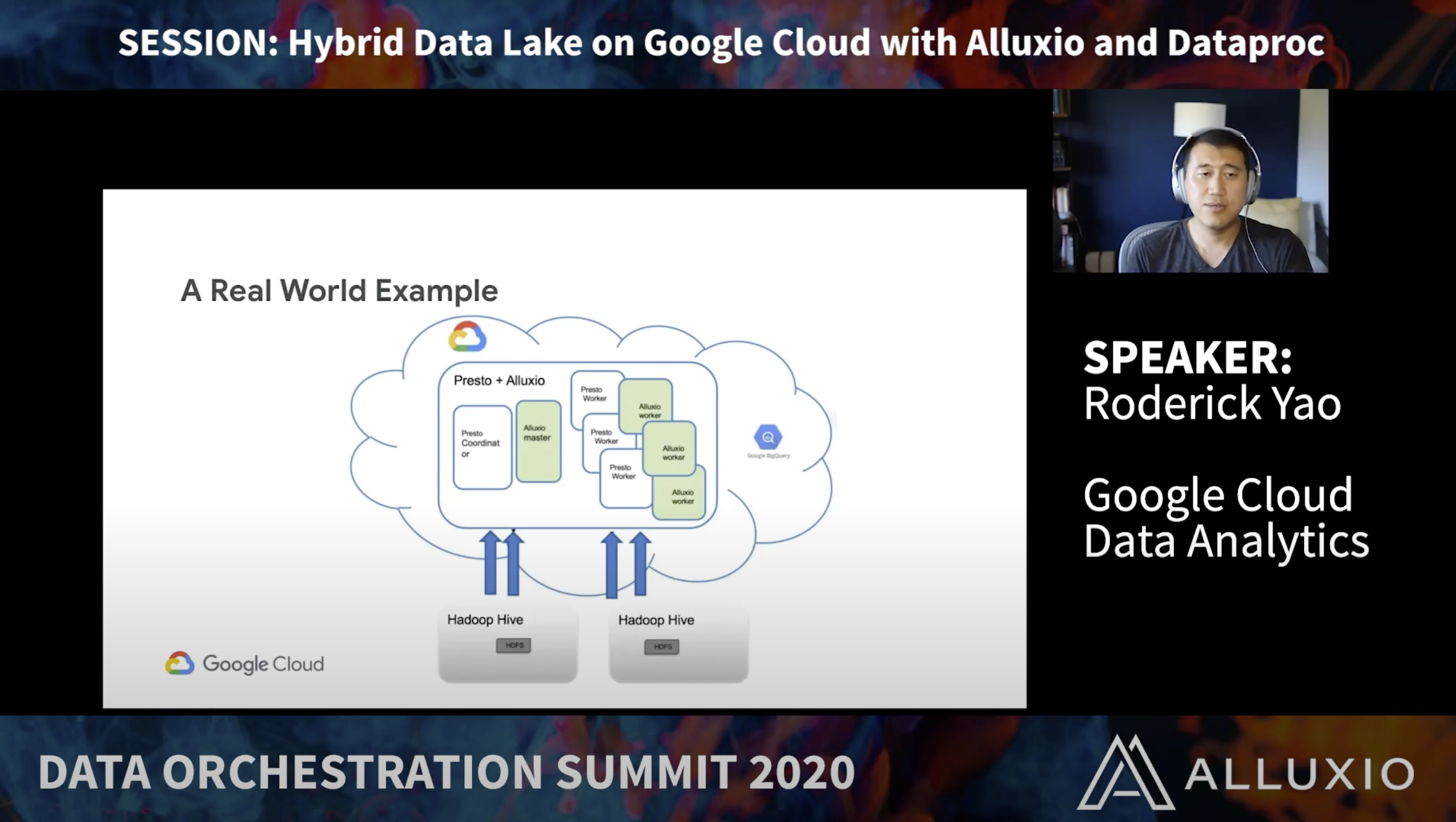

Hybrid Data Lake on Google Cloud with Alluxio and Dataproc

Roderick Yao, Google Cloud

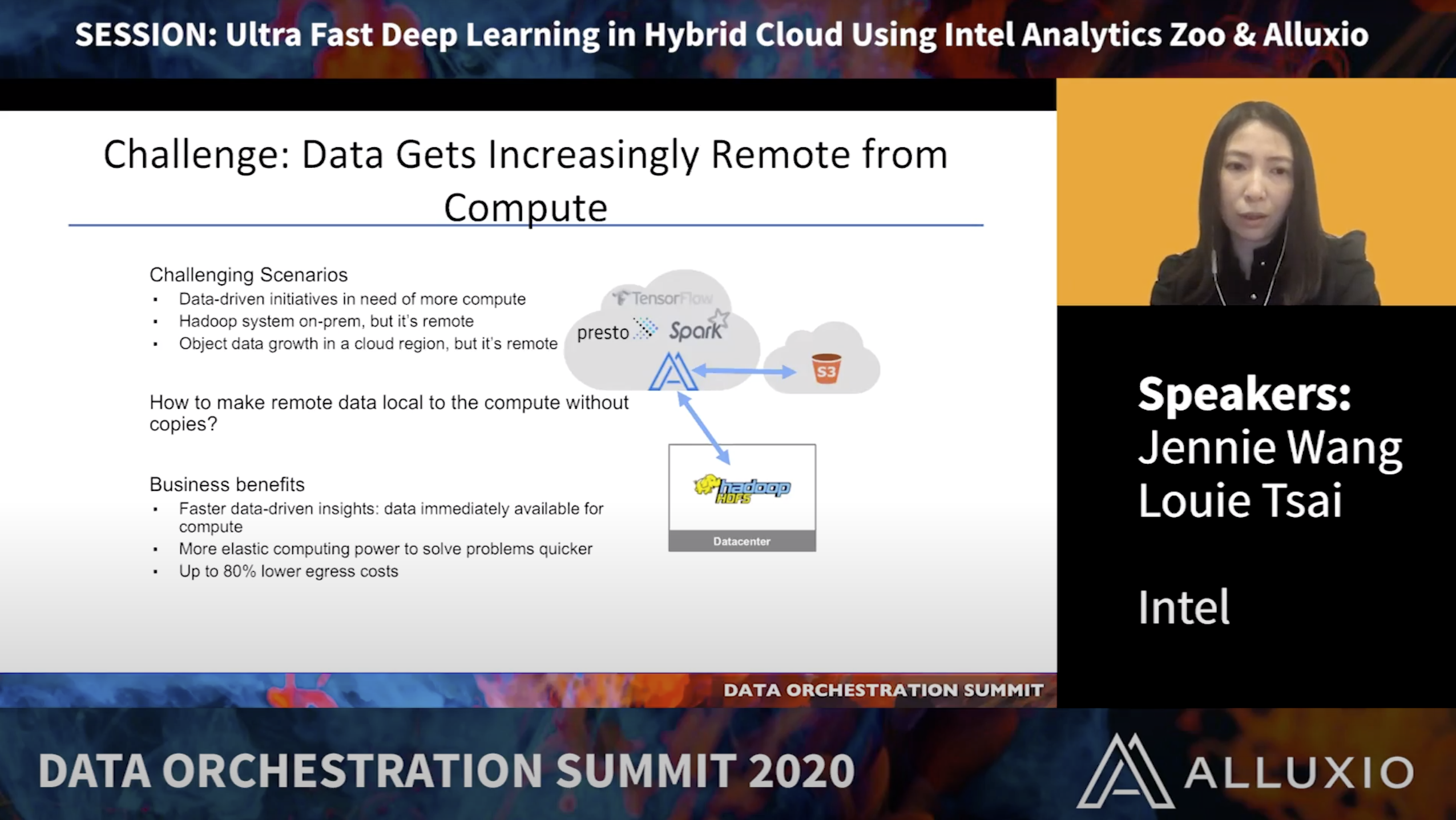

Ultra Fast Deep Learning in Hybrid Cloud using Intel Analytics Zoo & Alluxio

Jennie Wang & Louie Tsai, Intel

ORCHESTRATING DATA FOR MACHINE LEARNING

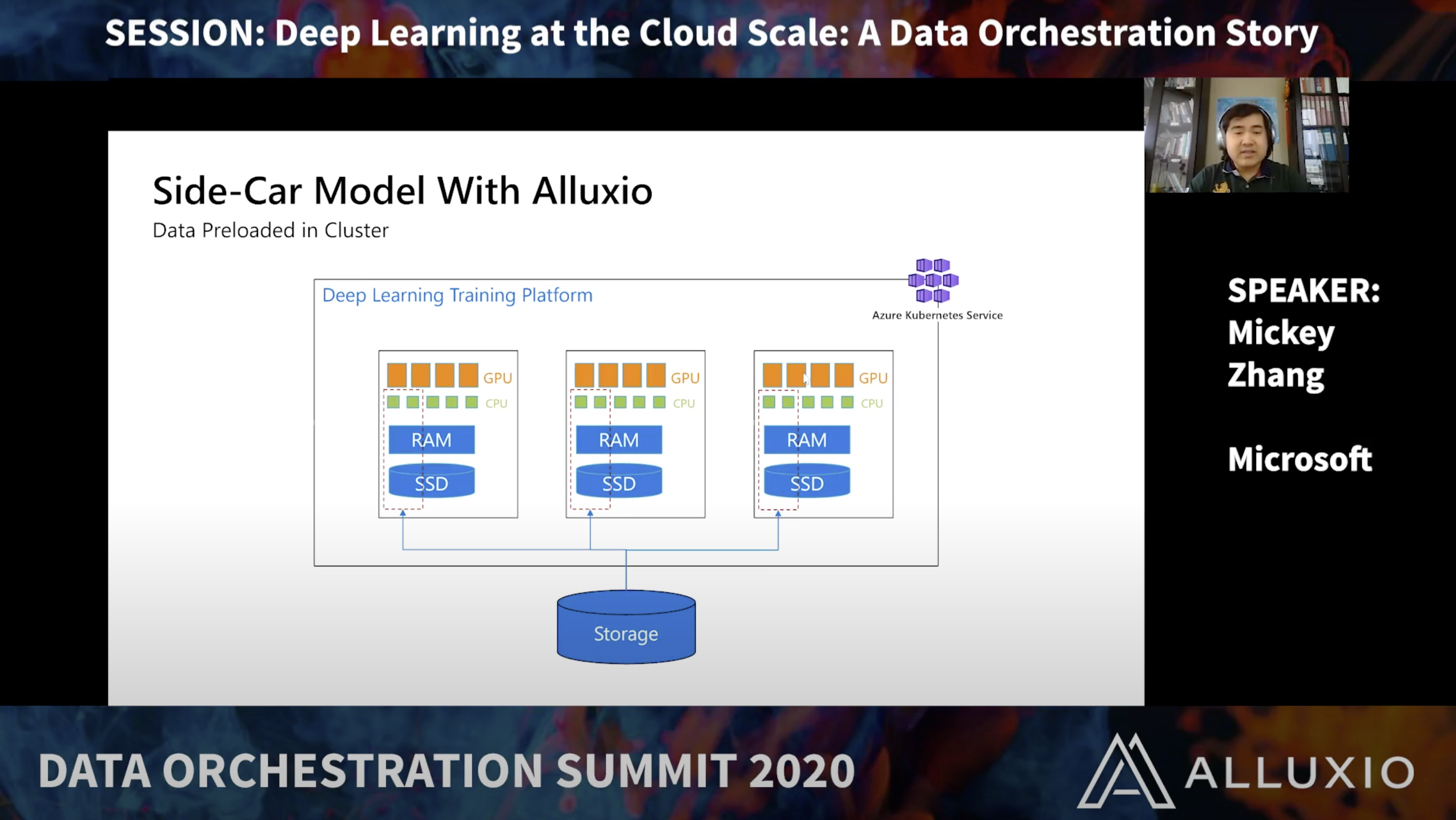

Deep Learning in the Cloud at Scale: A Data Orchestration Story

Mickey Zhang, Microsoft

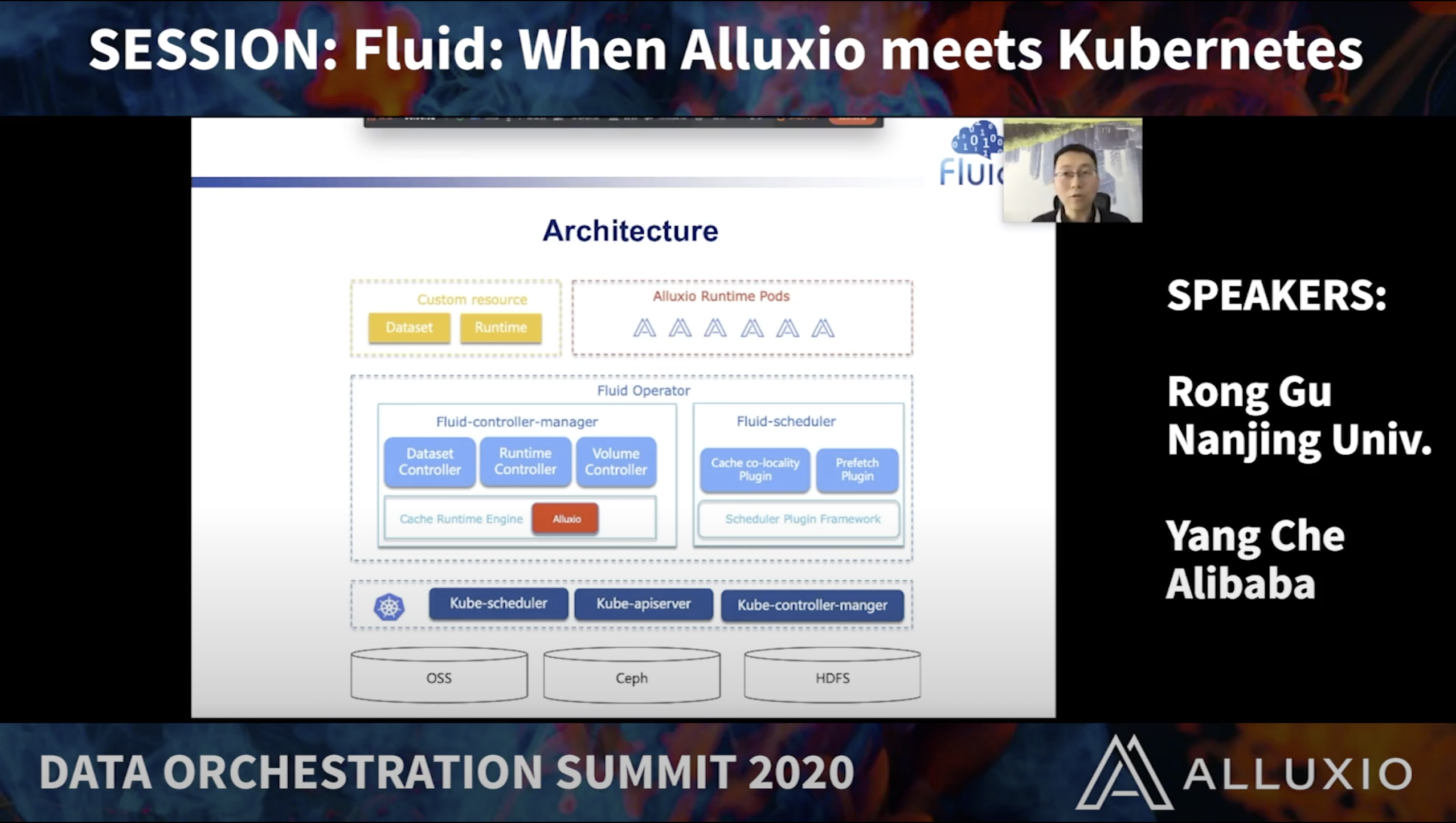

Speeding Up Atlas Deep Learning Platform with Alluxio + Fluid

Yuandong Xie, Unisound

The Hidden Engineering Behind Machine Learning Products at Helixa

Gianmario Spacagna, Helixa

HIGH PERFORMANCE SQL ANALYTICS

Achieving Massive Concurrency and Sub-second query latency on Cloud warehouses and data lakes with Kyligence Cloud

George Demarest, Kyligence

Accelerating Data Computation on Ceph Objects using Alluxio

Leonardo Militano, Zurich University

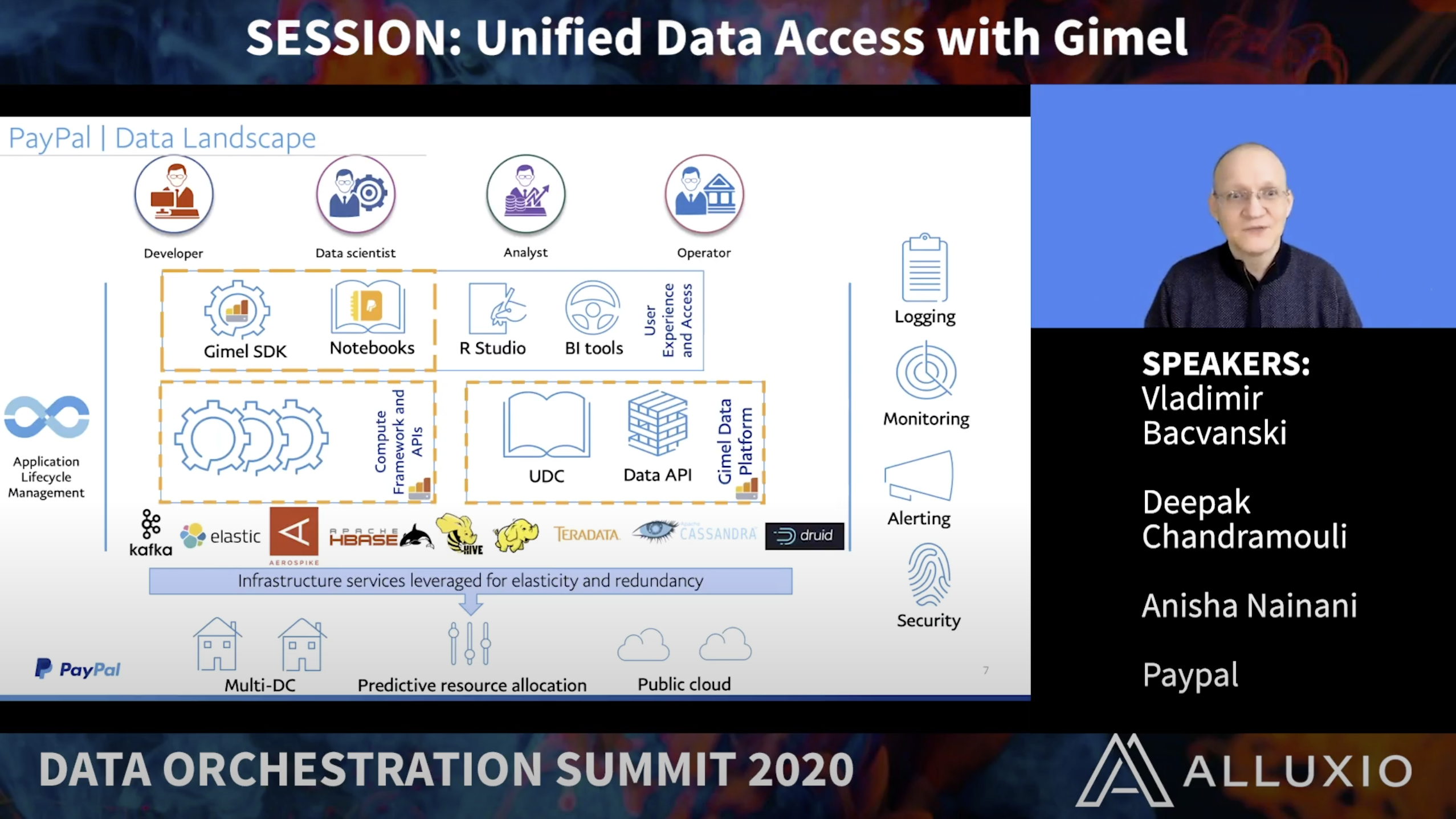

Unified Data Access with Gimel

Deepak Chandramouli, Anisha Nainani, & Dr. Vladimir Bacvanski, PayPal

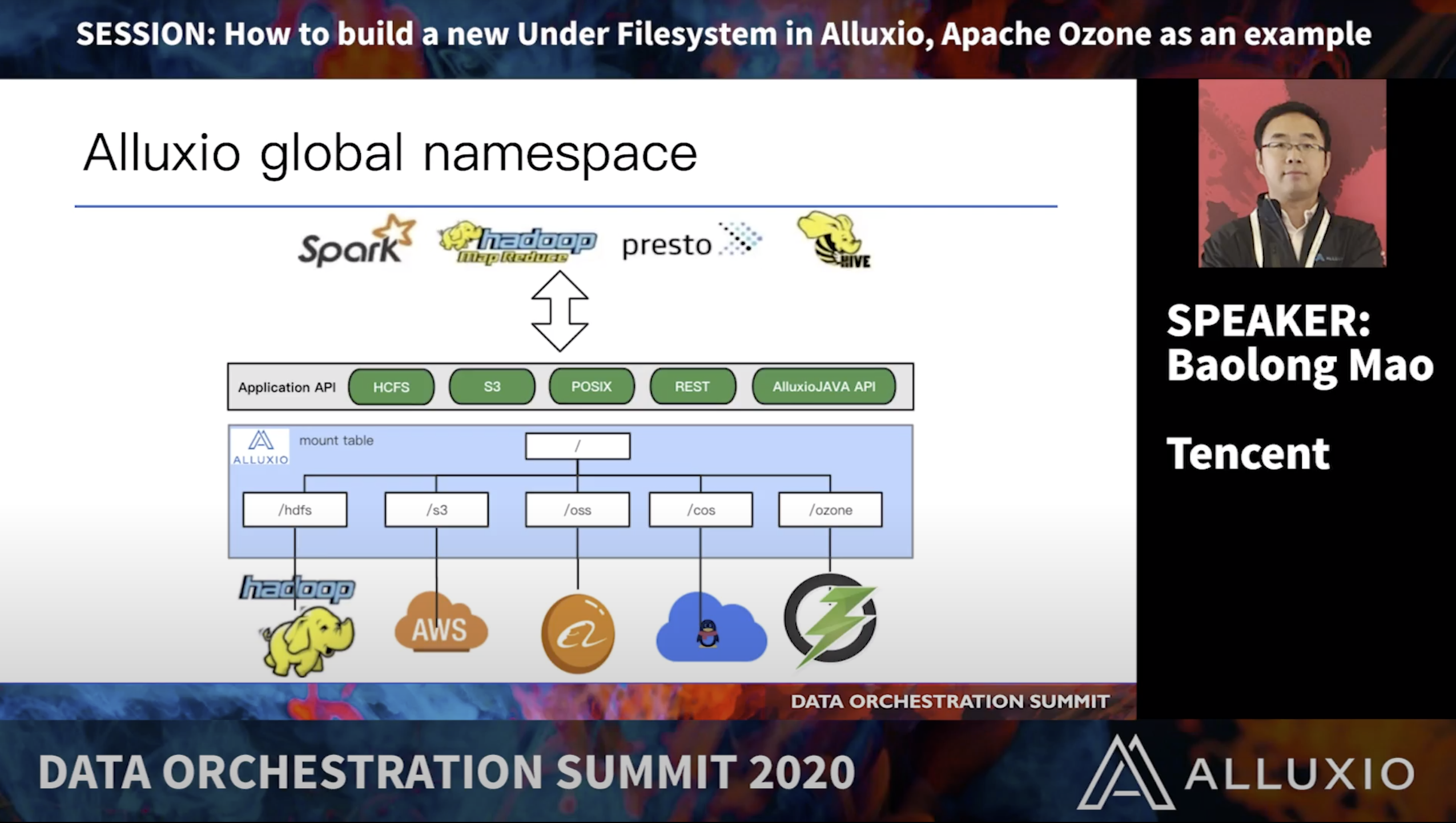

How to Build a new under filesystem in Alluxio: Apache Ozone as an example

Baolong Mao, Tencent

The practice of Presto & Alluxio in E-commerce big data platform

Wenjun Tao, JD.com

SPEAKERS

Ion Stoica

RISELab

Professor, EECS Dept. at UC Berkeley

Haoyuan Li

Alluxio

Founder, CEO

Ke Wang

Facebook

Software Engineer

Mike Fagan

Comcast

Distinguished Architect

Sandipan Chakraborty

Rakuten

Director of Engineering

Katarzyna Orzechowska

ING

Data Scientist

Serena Wang

Electronic Arts

Software Engineer

Mickey Zhang

Microsoft

Software Engineer

Jiawei Zhang

Robinhood

Data Infrastructure Engineer

Roderick Yao

Google Cloud

Strategic Cloud Engineer

Parviz Peiravi

Intel

Global CTO/Principal Engineer

Roy Hasson

AWS

Sr. Manager, Analytics and Datalakes

Calvin Jia

Alluxio

Founding Engineer, Product

Anisha Nainani

PayPal

Sr. Software Engineer

Yang Che

Alibaba

Staff Engineer

Kamil Bajda-Pawlikowski

Starburst

Co-founder & CTO

Jennie Wang

Intel

Software Engineer

Deepak Chandramouli

PayPal

Engineering Lead

Baolong Mao

Tencent

Sr. System Engineer

Louie Tsai

Intel

Software Engineer

Vladimir Bacvanski

PayPal

Principal Architect

Juraj Pohanka

Datasapiens

CTO

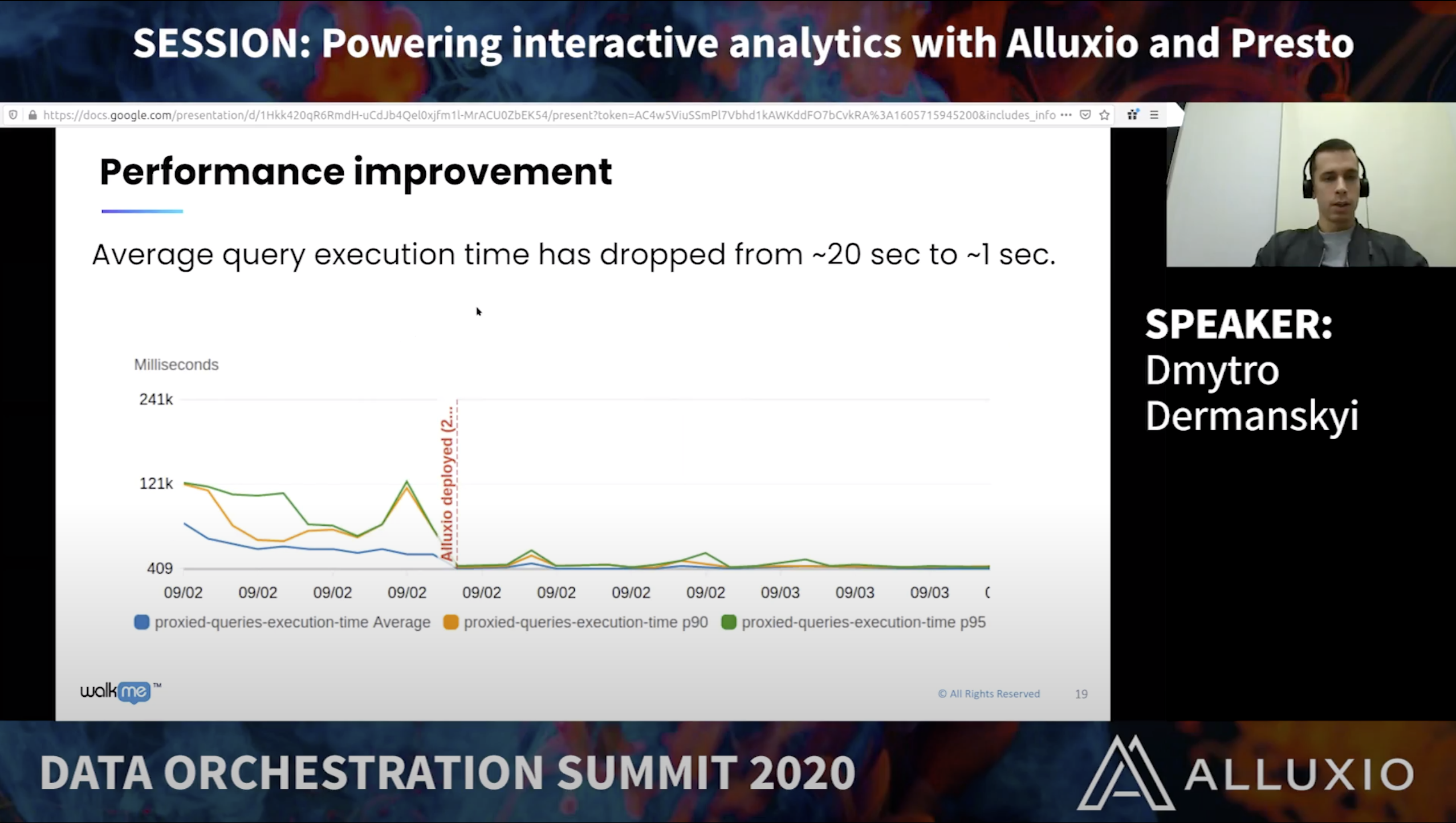

Dmytro Dermanskyi

WalkMe

Data Engineering Lead

George Demarest

Kyligence

Head of Marketing

Ce Zhang

China Unicom

Big Data Engineer

Koen Michiels

Datasapiens

CEO & Co-Founder

Davy Wang

Tencent

Chief Solutions Architect

Tom Panozzo

Aunalytics

Chief Technology Officer

Bin Fan

Alluxio

Founding Engineer, VP of Open Source

Yichuan Huang

Robinhood

Data Platform Engineer

Adit Madan

Alluxio

Product Manager

Rong Gu

Nanjing University

Ph.D., Associate Research Professor

Mariusz Derela

ING Bank

DevOps Engineer

Wenjun Tao

JD.com

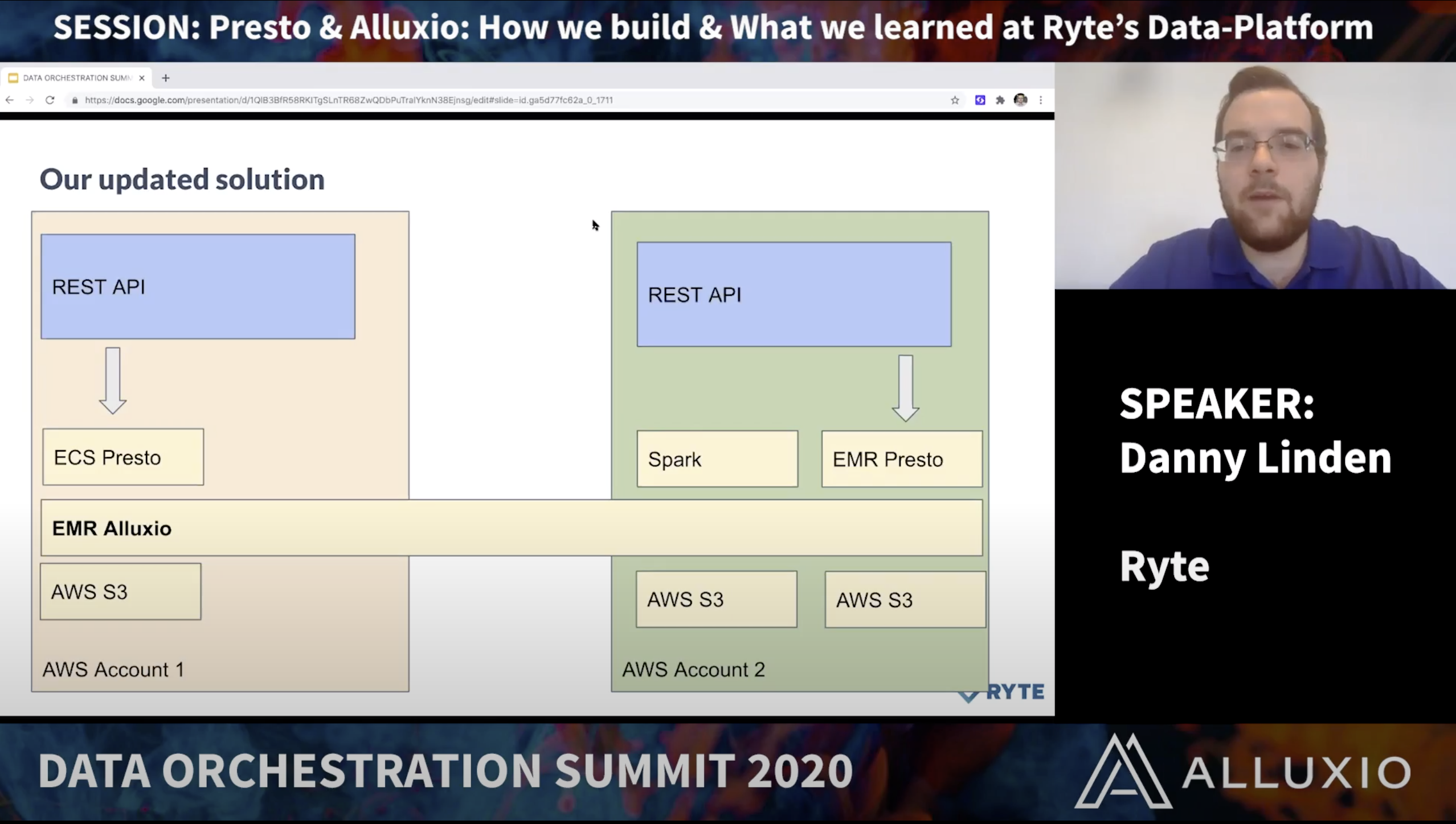

Danny Linden

Ryte

Chapter Lead Software Engineer

Trevor Zhang

T3Go

Big Data Sr. Engineer

Gianmario Spacagna

Helixa

Chief Scientist, Head of AI

Leonardo Militano

Zurich University

Senior Researcher

Yuandong Xie

Unisound

Platform Researcher

Yu Jin

Electronic Arts

Sr. Engineering Manager
