Caching frequently used data in memory is not a new computing technique, but Alluxio takes the concept to the next level by aggregating data from multiple storage systems into a unified pool of memory. Alluxio's capabilities extend further to intelligently managing the data within that virtual data layer. Tiered locality uses awareness of network topology and configurable policies to manage data placement for performance and cost optimization. This feature is particularly useful in cloud deployments that span multiple availability zones. It can also save money in environments where cross-zone or cross-location traffic is more expensive than intra-zone traffic.

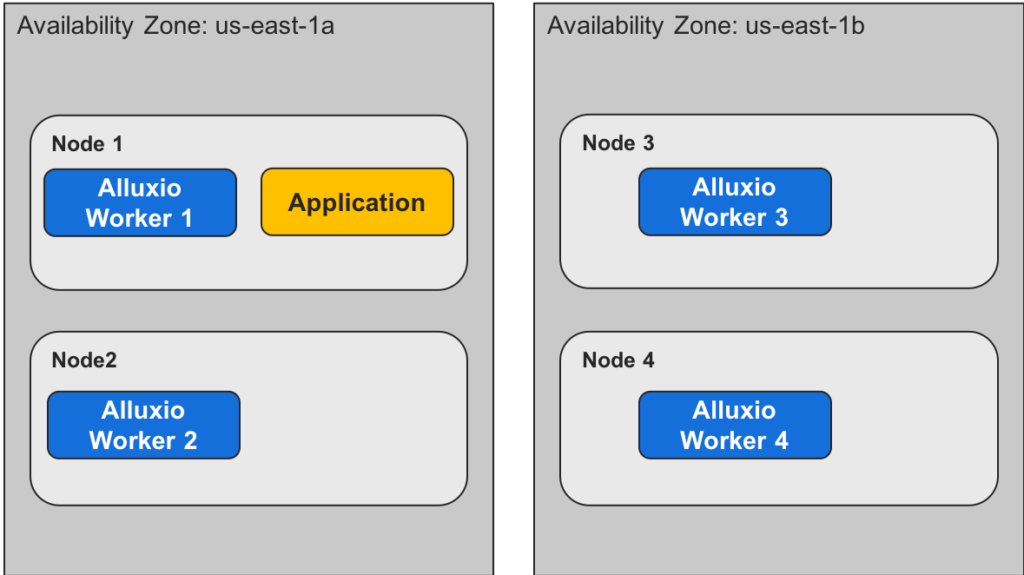

Here is a simple scenario, with Alluxio workers in two different AWS Availability Zones, where Alluxio uses network topology information to prefer more local reads and writes.

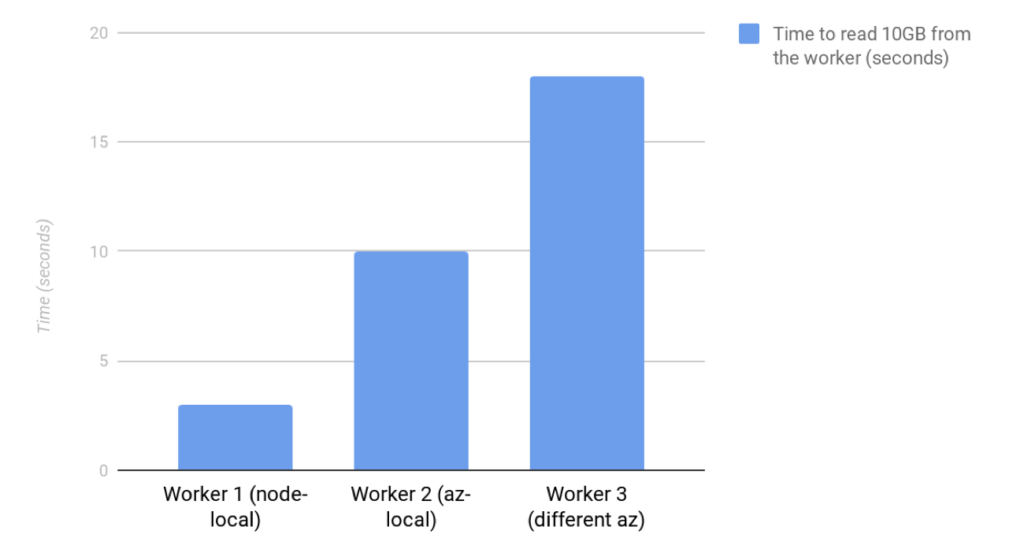

Unsurprisingly, reads are fastest from the local Alluxio worker and slower from a non-local worker. The performance difference between worker 2 and worker 3 comes from the difference in available bandwidth. Worker 2 is in the same Availability Zone (AZ) as the application, with about 10 gigabits per second of bandwidth. Reading from worker 3 is slower because the bandwidth across AZs is only about 5 gigabits per second.
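As a rough back-of-the-envelope illustration of that bandwidth gap, the sketch below estimates the ideal transfer time for a payload at each link speed. The 1 GB payload size and the helper name `transfer_seconds` are assumptions made for illustration. Real reads also pay latency and protocol overhead, so treat the results as lower bounds rather than measurements.

```python
def transfer_seconds(size_bytes: int, gigabits_per_second: float) -> float:
    """Ideal time to move size_bytes over a link, ignoring latency and overhead."""
    bits = size_bytes * 8
    return bits / (gigabits_per_second * 1e9)


payload = 10**9  # hypothetical 1 GB of cached data

same_az = transfer_seconds(payload, 10)  # worker 2: about 10 Gb/s within the AZ
cross_az = transfer_seconds(payload, 5)  # worker 3: about 5 Gb/s across AZs

print(f"same AZ: {same_az:.1f}s, cross AZ: {cross_az:.1f}s")
```

Under these assumptions, halving the bandwidth doubles the ideal transfer time, which is why preferring the same-AZ worker pays off whenever both workers hold the data.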

Without tiered locality, the application is just as likely to read from worker 2 as worker 3 if both workers have the data cached. Configuring tiered locality gives a preference for worker 2 and faster performance. The situation is similar for writes. When applications write data through Alluxio, they will prefer to write to more-local workers for improved performance.

Configuration

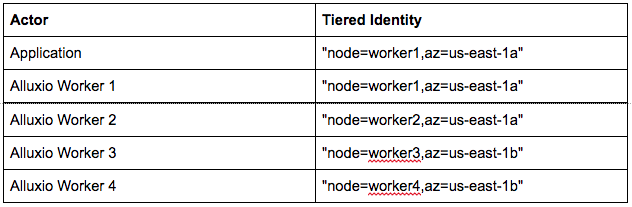

To enable tiered locality, and the associated performance benefit, every actor (clients and workers) must be configured to know its tiered identity. A tiered identity is a mapping from each locality tier (e.g. Availability Zone) to the value for that tier (e.g. us-east-1a). For the cluster above, the application, worker 1, and worker 2 have the availability zone identity us-east-1a, while workers 3 and 4 have us-east-1b.

Configure with alluxio-site.properties

The simplest way to configure tiered locality is to set it in alluxio-site.properties like any other property. For details, see: https://docs.alluxio.io/os/user/1.8/en/Overview.html

Properties for Application, Worker 1, and Worker 2:

alluxio-site.properties

alluxio.locality.az=us-east-1a

# custom locality hierarchy
alluxio.locality.order=node,az

Properties for Worker 3 and Worker 4:

alluxio-site.properties

alluxio.locality.az=us-east-1b

# custom locality hierarchy
alluxio.locality.order=node,az

We set “alluxio.locality.order” to introduce the “az” locality tier and show its order in the locality hierarchy. By default the locality tiers are “node,rack”. Note that we don’t need to explicitly configure “node” identity because it is determined automatically via localhost lookup.
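To make the role of the locality order concrete, here is a minimal Python sketch of how a client could rank workers by the earliest tier in which the two identities match. It illustrates the idea only and is not Alluxio's actual implementation. The function names, host names, and dictionary layout are all hypothetical.

```python
from typing import Dict, List, Optional

TieredIdentity = Dict[str, str]  # tier name -> value, e.g. {"az": "us-east-1a"}


def first_matching_tier(a: TieredIdentity, b: TieredIdentity,
                        order: List[str]) -> Optional[int]:
    """Return the index of the earliest tier where both identities agree."""
    for index, tier in enumerate(order):
        value = a.get(tier)
        if value is not None and value == b.get(tier):
            return index
    return None  # no tier matches


def pick_worker(client: TieredIdentity,
                workers: Dict[str, TieredIdentity],
                order: List[str]) -> Optional[str]:
    """Pick the worker that matches the client in the most local tier."""
    best_name, best_rank = None, len(order)
    for name, identity in workers.items():
        rank = first_matching_tier(client, identity, order)
        if rank is not None and rank < best_rank:
            best_name, best_rank = name, rank
    return best_name  # None means no worker is local in any tier


order = ["node", "az"]  # mirrors alluxio.locality.order=node,az
client = {"node": "app-host", "az": "us-east-1a"}  # hypothetical host names
workers = {
    "worker3": {"node": "host-3", "az": "us-east-1b"},
    "worker2": {"node": "host-2", "az": "us-east-1a"},
}
chosen = pick_worker(client, workers, order)
print(chosen)
```

In this sketch no worker shares the client's node, so the choice falls through to the "az" tier and selects worker2. A worker on the same node as the client would win outright, because "node" comes first in the order.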

Configure with alluxio-locality.sh

When the cluster is set up automatically, or there are many workers, it can be convenient to set the locality information via script instead of using a static value in alluxio-site.properties. If a script exists at ${ALLUXIO_HOME}/conf/alluxio-locality.sh, it will be executed to determine tiered identity.

alluxio-locality.sh

#!/bin/bash

echo "az=$(curl -s http://169.254.169.254/latest/meta-data/placement/availability-zone)"
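Before rolling such a script out to many nodes, it can help to check that its output parses as expected. The Python sketch below does that under one assumption: the script prints `tier=value` pairs on a single line, separated by commas when there is more than one pair (the script above prints exactly one). The helper name `parse_tiered_identity` is hypothetical and not part of Alluxio.

```python
def parse_tiered_identity(output: str) -> dict:
    """Parse 'tier=value[,tier=value...]' into a dict, rejecting malformed pairs."""
    identity = {}
    for pair in output.strip().split(","):
        if not pair:
            continue  # tolerate empty output or a trailing comma
        tier, sep, value = pair.partition("=")
        if not sep or not tier.strip() or not value.strip():
            raise ValueError(f"malformed tier pair: {pair!r}")
        identity[tier.strip()] = value.strip()
    return identity


# Example with the kind of line the script above would print in us-east-1a.
parsed = parse_tiered_identity("az=us-east-1a\n")
print(parsed)
```

A failed metadata lookup would leave the value empty, which this check turns into an explicit error instead of a silently wrong identity.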

Custom Tiers

In this example we used the tiers “node” and “az”, but the tier configuration is fully customizable, so you can use whatever tiers make sense for your deployment, e.g. “rack”, “zone”, “region”, etc. Just make sure each tier is contained within the next one listed in “alluxio.locality.order”, since locality decisions prefer to match in the earliest tier possible.

Interaction with Location Policies

In addition to tiered locality, Alluxio has a concept of using a location policy during reads and writes to help select the worker to use. The policies interact with tiered locality in different ways.

- LocalFirstPolicy: Choose a worker that matches in the most local tier possible

- LocalFirstAvoidEvictionPolicy: Like LocalFirstPolicy, but avoiding eviction takes priority over locality

- MostAvailableFirstPolicy: Unaffected by locality information

- RoundRobinPolicy: Unaffected by locality information

- SpecificHostPolicy: Unaffected by locality information

Cluster Partitioning with Strict Locality

Alluxio Enterprise Edition adds another capability: strict locality tiers. Users can define a tier to be “strict”, meaning that no traffic is allowed between actors that don’t match in that tier. This partitions the cluster into zones which handle data independently, sharing only metadata. For the availability zone example, this means that applications in us-east-1a will only communicate with workers in us-east-1a. Even if data is cached on a worker in us-east-1b, an application in us-east-1a will instead read the data from the under file system (UFS), caching it to a worker in us-east-1a.

Strict locality is necessary when cross-zone traffic is not possible or not permitted, e.g. to comply with security or other data policies.

To configure strict locality for the “az” tier in Enterprise Edition, set:

alluxio.locality.az.strict=true
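The effect of a strict tier can be sketched in a few lines of Python. This is an illustration of the partitioning behavior described above, not Enterprise Edition code. The function names, worker names, and the "UFS" return value are hypothetical.

```python
from typing import Dict, List

TieredIdentity = Dict[str, str]


def eligible_workers(client: TieredIdentity,
                     workers: Dict[str, TieredIdentity],
                     strict_tiers: List[str]) -> List[str]:
    """Keep only workers that match the client in every strict tier."""
    return [
        name for name, identity in workers.items()
        if all(client.get(tier) is not None
               and identity.get(tier) == client.get(tier)
               for tier in strict_tiers)
    ]


def read_source(client: TieredIdentity,
                workers_with_block: Dict[str, TieredIdentity],
                strict_tiers: List[str]) -> str:
    """Return a permitted worker holding the block, or 'UFS' if none qualifies."""
    candidates = eligible_workers(client, workers_with_block, strict_tiers)
    return candidates[0] if candidates else "UFS"


client = {"az": "us-east-1a"}
cached_on = {"worker3": {"az": "us-east-1b"}}  # block cached only in the other AZ

source = read_source(client, cached_on, strict_tiers=["az"])
print(source)
```

Here the only cached copy sits in us-east-1b, so the sketch falls back to the UFS rather than crossing zones. With no strict tiers configured, the same call would be free to return worker3.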

Conclusion

When clusters have non-uniform networking capabilities, it makes sense to configure Alluxio tiered locality to improve performance. It can also save on cost in environments where cross-zone network transfer is more expensive than intra-zone data transfer.