Happy New Year! We wish our community contributors and users a successful 2022! Thank you in advance for your continued support and contributions. As an open source community, we will continue to share our data orchestration knowledge to help more organizations solve their data challenges. This newsletter features popular engineering blogs on metadata sync and machine learning (ML) topics, a Microsoft Bing use case, and a conversation with UC Berkeley AMPLab pioneers.

HIGHLIGHTS

A Year with Alluxio Community 2021

2021 marked accelerated growth for the Alluxio Open Source Project. We are tremendously grateful for what the community has achieved together in this past year. Check out highlights on community growth and activities in 2021.

Virtual Product School | Architecting a Heterogeneous Data Platform Across Clusters, Regions, and Clouds

January 27, 10:00 AM PT

Alluxio foresaw the need for agility when accessing data across silos separated from compute engines like Spark, Presto, TensorFlow, and PyTorch. Embracing the separation of storage from compute, the Alluxio data orchestration platform simplifies adoption of the data lake and data mesh paradigms for analytics and AI/ML. In this webinar, Alluxio’s Sr. Product Manager, Adit Madan, will share observations to help you identify ways to use the platform to meet the needs of your data environment and workloads. If you are unable to attend the live event, sign up to receive the exclusive webinar replay.

Pioneer Dialogue | Haoyuan Li & Ion Stoica: Next Stop for Big Data

During the recent Huatai Securities Fintech Investment Summit, Alluxio’s founder, Haoyuan Li, invited his former Ph.D. advisor, Prof. Ion Stoica, co-director of the UC Berkeley AMPLab and co-founder of Databricks, to discuss the future of big data development and open source technology. They also shared their accomplishments at AMPLab.

Got a tech question for the Alluxio Community? Chat with us on Slack!

ENGINEERING BLOGS

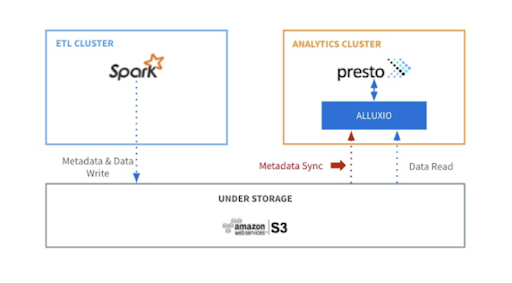

Metadata Synchronization in Alluxio: Design, Implementation and Optimization

Metadata Analytics Storage

Metadata sync is one of the most critical features for ensuring that users always retrieve the latest version of data from Alluxio. Check out the latest blog by our engineering manager, David Zhu, which introduces the design, implementation, and optimization of metadata sync in Alluxio.

Alluxio’s Machine Learning Model Training Series

ML DL Architecture

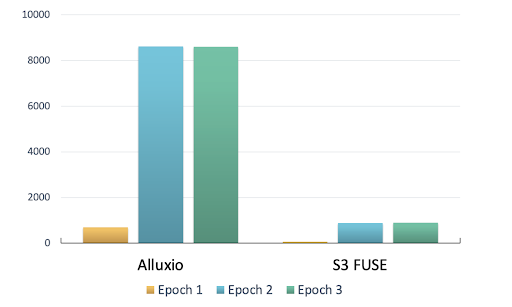

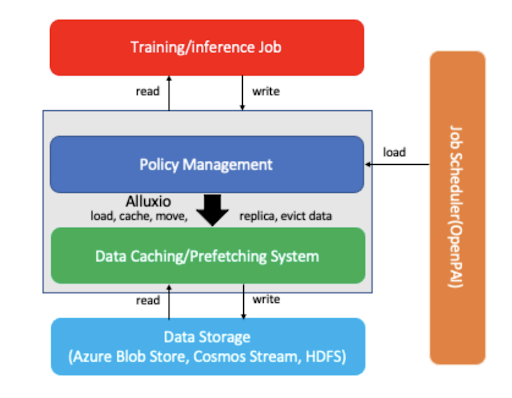

This 3-part series by our VP of Open Source, Bin Fan, and Machine Learning Engineer, Lu Qiu, provides a comprehensive overview of running machine learning on Alluxio, including the solution, architecture, and benchmark. Several leading companies, including Microsoft, Momo, Tencent, and Unisound, have adopted Alluxio in their machine learning platforms.

Speed up Large-scale ML/DL Offline Inference Jobs with Alluxio at Microsoft Bing

Microsoft Inference job

Running inference at scale is challenging. In this blog, our users, Software Engineer Binyang Li and Sr. Research Software Engineer Qianxi Zhang, share their observations and their practice of using Alluxio to speed up I/O performance for large-scale ML/DL offline inference at Microsoft Bing. Check out their user story.

CONTRIBUTOR SPOTLIGHT

Baolong Mao is a Software Engineer at Tencent. He is the creator and tech lead of the Tencent Alluxio OTeam, focusing on helping Tencent improve performance on big data and ML workloads. He also loves contributing to open source communities and sharing best practices and knowledge of Alluxio core technology. Baolong has been a PMC member of the Alluxio Open Source Project and was recently promoted to PMC maintainer of the project in recognition of his contributions!

Be our stargazers on GitHub ⭐

If you like our product, please give it a star on GitHub and help our open source community grow!

FREE RESOURCES

Whitepaper | Presto with Alluxio Overview – Architecture Evolution for Interactive Queries

Whitepaper | Accelerating Machine Learning / Deep Learning in the Cloud: Architecture and Benchmark

HOT JOBS

With its recently completed Series C investment, Alluxio will continue fueling its rapid growth by investing in expanded product capabilities as well as scaling go-to-market and engineering operations across the globe. Check out over 20 job opportunities here.

Solutions Engineer | CA, United States

Solutions Engineer | United Kingdom